New Approach to Regression with R

Regression analysis is one of the most widely used techniques in data analysis. It is a powerful tool for predicting outcomes and for understanding how variables relate to one another. However, the traditional approach, ordinary least-squares linear regression, rests on assumptions that real data often violate, and this has led to the development of more flexible techniques. In this article, we explore a new approach to regression with R that addresses these limitations by combining non-linear regression, generalized linear models, and Bayesian regression.

Introduction

In this section, we will introduce the topic of regression analysis and its importance in data analysis. We will also discuss the limitations of the traditional approach to regression analysis.

What is regression analysis?

Regression analysis is a statistical technique used to explore the relationship between a dependent variable and one or more independent variables. It is used to predict the value of the dependent variable based on the values of the independent variables.
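As a minimal sketch, the following R code fits a simple linear regression with the base `lm()` function. The data are simulated purely for illustration (the intercept 2 and slope 0.5 are arbitrary assumptions), so the estimates can be checked against known values.

```r
# Simulated data (illustrative only): y depends linearly on x plus normal noise.
set.seed(42)
n <- 100
x <- runif(n, min = 0, max = 10)
y <- 2 + 0.5 * x + rnorm(n, sd = 1)
dat <- data.frame(x = x, y = y)

# Regress the dependent variable y on the independent variable x (ordinary least squares).
fit <- lm(y ~ x, data = dat)

# Estimated intercept and slope; they should be close to the true values 2 and 0.5.
print(coef(fit))

# Full summary: standard errors, t-tests, R-squared, residual standard error.
print(summary(fit))
```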

Importance of regression analysis

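Regression analysis is an important tool in data analysis because it helps us understand the relationship between variables. It can be used to predict outcomes and to identify which factors are most strongly associated with an outcome, although with observational data such associations do not by themselves establish cause and effect.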
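Both uses, prediction and identifying influential factors, can be sketched with base R. The example below is hypothetical: the variable names `sales`, `advertising`, and `price` and their simulated relationship are invented for illustration. `summary()` reports the coefficient table used to judge which predictors matter, and `predict()` with `interval = "prediction"` returns predicted values with 95% prediction intervals.

```r
# Hypothetical data: sales driven by advertising spend and price, plus noise.
set.seed(1)
n <- 200
advertising <- runif(n, min = 0, max = 100)
price <- runif(n, min = 5, max = 15)
sales <- 50 + 0.8 * advertising - 3 * price + rnorm(n, sd = 5)
dat <- data.frame(sales = sales, advertising = advertising, price = price)

fit <- lm(sales ~ advertising + price, data = dat)

# Coefficient table: estimates, standard errors, t statistics, p-values.
coef_table <- summary(fit)$coefficients
print(coef_table)

# Predicted sales for two new scenarios, with 95% prediction intervals
# (columns: fit, lwr, upr).
new_data <- data.frame(advertising = c(20, 80), price = c(10, 10))
pred <- predict(fit, newdata = new_data, interval = "prediction", level = 0.95)
print(pred)
```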

Limitations of the traditional approach to regression analysis

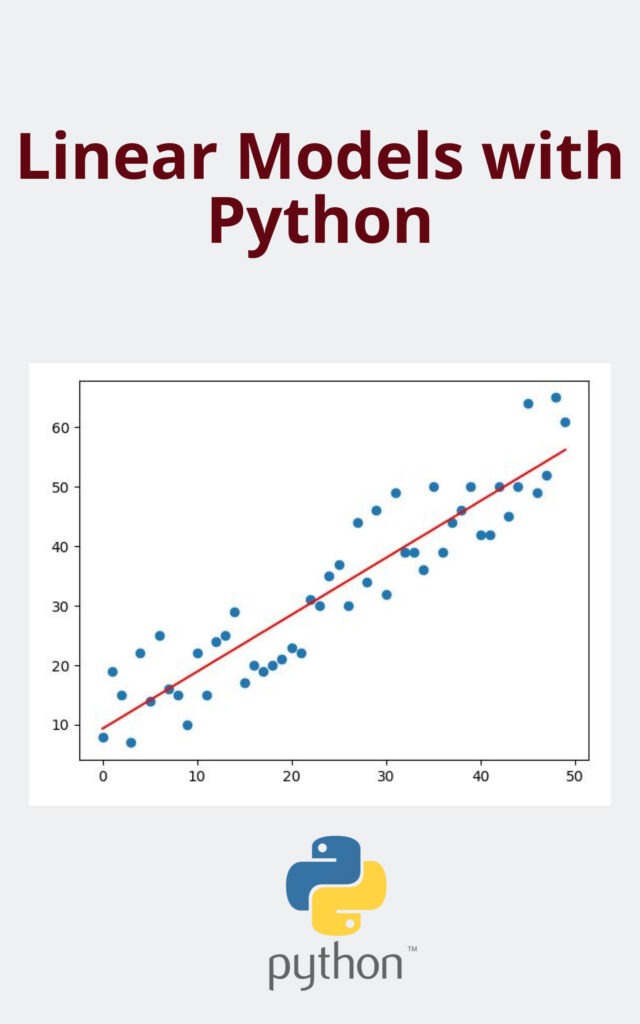

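The traditional approach to regression analysis, ordinary least-squares (OLS) linear regression, rests on assumptions that, when violated, can make its results misleading or difficult to interpret. One of the main assumptions is that the expected value of the dependent variable is a linear function of the independent variables (more precisely, linear in the model's coefficients). In its simplest form, this means that a one-unit change in an independent variable is assumed to have the same effect on the dependent variable across all of its values. Another assumption is that the errors are independent and normally distributed with constant variance; the usual standard errors, t-tests, and confidence intervals depend on it.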
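The linearity limitation is easy to see on simulated data. In the sketch below (the data are simulated, and the U-shaped truth is an assumption chosen for illustration), a straight line fitted to a curved relationship leaves residuals that are systematically positive at both ends and negative in the middle. Adding a squared term with `I(x^2)` removes the pattern; note that this model is still a linear regression because it is linear in its coefficients, so it only helps when the right transformation is known in advance. `shapiro.test()` offers one informal check of the normality assumption.

```r
set.seed(7)
n <- 150
x <- runif(n, min = -3, max = 3)
y <- 1 + x^2 + rnorm(n, sd = 0.5)   # the true relationship is U-shaped (assumption)
dat <- data.frame(x = x, y = y)

straight <- lm(y ~ x, data = dat)            # assumes a constant slope
curved   <- lm(y ~ x + I(x^2), data = dat)   # still linear in its coefficients

# Mean residual of the straight-line fit in three bands of x:
# positive at both ends, negative in the middle, i.e. systematic misfit.
bands <- cut(dat$x, breaks = c(-3, -1.5, 1.5, 3))
bin_means <- tapply(residuals(straight), bands, mean)
print(bin_means)

# Share of variance explained by each model
print(c(straight = summary(straight)$r.squared,
        curved   = summary(curved)$r.squared))

# Shapiro-Wilk test on the residuals: an informal check of the normality assumption
print(shapiro.test(residuals(curved)))
```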

The new approach to regression with R

In this section, we will introduce the new approach to regression with R that addresses the limitations of the traditional approach. We will also discuss the benefits of using this approach.

Non-linear regression

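The new approach to regression with R allows for non-linear relationships between the dependent variable and the independent variables. This means that the effect of an independent variable can change depending on its value, as with growth curves that level off or reaction rates that saturate. Non-linear regression models are more flexible than linear regression models and can provide a better fit when the underlying relationship is genuinely non-linear, although they usually need starting values for their parameters and can overfit if the chosen curve is more complex than the data support.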
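In R, non-linear least squares is available through `nls()` in the base stats package. The sketch below fits the Michaelis-Menten curve, rate = Vm · conc / (K + conc), to the built-in `Puromycin` data set (treated samples only). The starting values `Vm = 200` and `K = 0.05` are rough guesses read off the data; `nls()` needs such values because it fits the curve iteratively.

```r
# Built-in data: enzyme reaction rate vs substrate concentration; keep treated samples.
treated <- subset(Puromycin, state == "treated")

# Michaelis-Menten model: rate rises with conc and levels off at Vm;
# K is the concentration at which rate reaches half of Vm.
fit_nls <- nls(rate ~ Vm * conc / (K + conc),
               data = treated,
               start = list(Vm = 200, K = 0.05))

print(coef(fit_nls))
print(summary(fit_nls))

# Predicted rates at two new concentrations
print(predict(fit_nls, newdata = data.frame(conc = c(0.05, 0.5))))
```

Because `Puromycin` ships with R, this example runs as is. For common curves, self-starting models such as `SSmicmen()` can supply the starting values automatically.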

Generalized linear models

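The new approach to regression with R also includes generalized linear models (GLMs). Rather than assuming normally distributed errors, a GLM lets the dependent variable follow another distribution from the exponential family, such as the binomial for yes/no outcomes, the Poisson for counts, or the Gamma for positive, right-skewed measurements, and connects the mean of that distribution to the independent variables through a link function. This means the model can respect the natural range of the data (for example, predicted counts are never negative) and allow the variance to change with the mean. Generalized linear models therefore extend linear regression to many kinds of outcomes that it handles poorly.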
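A Poisson regression for count data illustrates the idea. The sketch below simulates counts whose log expected value is linear in `x` (the coefficients 0.3 and 0.8 are arbitrary assumptions) and fits them with `glm()` using `family = poisson(link = "log")`. The coefficients are on the log scale, so `exp()` turns the slope into a multiplicative effect on the expected count, and `type = "response"` returns predictions on the count scale.

```r
set.seed(2024)
n <- 500
x <- runif(n, min = 0, max = 2)
# Counts whose log expected value is linear in x (coefficients are arbitrary)
counts <- rpois(n, lambda = exp(0.3 + 0.8 * x))
dat <- data.frame(x = x, counts = counts)

# Poisson GLM with a log link: log(E[counts]) = b0 + b1 * x
fit_pois <- glm(counts ~ x, family = poisson(link = "log"), data = dat)
print(coef(fit_pois))          # log scale; should be near 0.3 and 0.8

# exp(slope): multiplicative change in the expected count per one-unit increase in x
print(exp(coef(fit_pois)[["x"]]))

# Expected counts for new x values, on the count (response) scale
pred_counts <- predict(fit_pois, newdata = data.frame(x = c(0.5, 1.5)), type = "response")
print(pred_counts)
```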

Bayesian regression

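The new approach to regression with R also includes Bayesian regression. Bayesian regression allows us to incorporate prior knowledge into the analysis through a prior distribution on the model's coefficients. This can be useful when we have some knowledge about the relationship between variables before we start the analysis. The result is a posterior distribution for each coefficient rather than a single estimate, which directly quantifies uncertainty; when the prior is well chosen, especially with small samples, Bayesian regression can also produce more stable estimates and predictions than traditional regression models.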
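To make the role of the prior concrete without extra packages, the sketch below implements the simplest conjugate case in base R: a normal prior on the coefficients and a noise standard deviation assumed to be known. The function `bayes_lm_known_sigma()` and the prior values are hypothetical choices for illustration; in practice, packages such as rstanarm or brms fit full Bayesian regressions (including an unknown noise variance) by MCMC. With prior β ~ N(m₀, S₀) and y | β ~ N(Xβ, σ²I), the posterior is normal with covariance Sₙ = (S₀⁻¹ + XᵀX/σ²)⁻¹ and mean mₙ = Sₙ(S₀⁻¹m₀ + Xᵀy/σ²).

```r
# Posterior for regression coefficients under a conjugate normal prior,
# assuming the noise standard deviation sigma is known (a simplification).
bayes_lm_known_sigma <- function(X, y, sigma, m0, S0) {
  S0_inv <- solve(S0)                                        # prior precision
  post_prec <- S0_inv + crossprod(X) / sigma^2               # prior + data precision
  Sn <- solve(post_prec)                                     # posterior covariance
  mn <- Sn %*% (S0_inv %*% m0 + crossprod(X, y) / sigma^2)   # posterior mean
  list(mean = drop(mn), cov = Sn)
}

# Small simulated data set (illustrative): true intercept 1, slope 0.5, sigma 2
set.seed(99)
n <- 15
x <- runif(n, min = 0, max = 10)
y <- 1 + 0.5 * x + rnorm(n, sd = 2)
X <- cbind(intercept = 1, x = x)

# Hypothetical prior: vague on the intercept, fairly confident the slope is near 0.5
m0 <- c(0, 0.5)
S0 <- diag(c(100, 0.1^2))

post <- bayes_lm_known_sigma(X, y, sigma = 2, m0 = m0, S0 = S0)
print(post$mean)

# Central 95% posterior credible interval for the slope
slope_sd <- sqrt(post$cov[2, 2])
print(post$mean[2] + c(-1.96, 1.96) * slope_sd)

# Ordinary least-squares estimates for comparison
print(coef(lm(y ~ x)))
```

With only 15 simulated observations, the posterior slope blends the prior guess with the data, and its credible interval is narrower than the data alone would give. With a very vague prior (large variances in `S0`), the posterior mean essentially reproduces the OLS estimates.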

Benefits of the new approach

The new approach to regression with R has several benefits over the traditional approach. It allows for non-linear relationships between variables, outcome distributions other than the normal, and the incorporation of prior knowledge into the analysis. This makes it a more flexible tool for data analysis, and one that can give more accurate results when the chosen model matches the structure of the data.