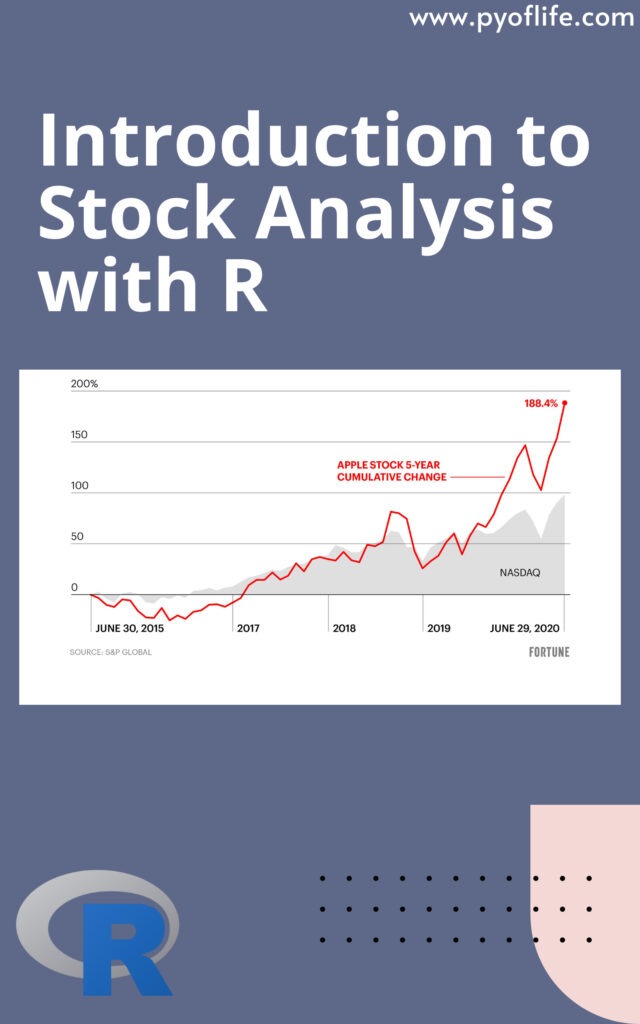

Introduction to Stock Analysis with R

Stock analysis serves as a compass for investors navigating financial markets, guiding them toward informed decisions amid large volumes of data and fluctuating trends. Technology has reshaped how that analysis is done, and among the many tools available, R stands out as a strong choice, offering a robust platform for comprehensive stock analysis.

What is Stock Analysis?

Stock analysis is the process of evaluating the financial performance and market dynamics of publicly traded companies. It encompasses a spectrum of techniques aimed at deciphering the intrinsic value of stocks, assessing market trends, and predicting future price movements. By scrutinizing financial statements, economic indicators, and industry trends, analysts aim to unearth investment opportunities and mitigate risks.

The Role of R in Stock Analysis

R, a programming language and software environment for statistical computing, has become a dependable companion for analysts working on stock analysis. Known for its flexibility and extensive library of statistical tools, R lets analysts process large datasets, visualize complex relationships, and build predictive models. Its open-source nature fosters an active community of developers who keep extending its packages, making it a valuable part of the modern investor's toolkit.

Getting Started with R

Stock analysis with R begins with installing R and RStudio, a popular integrated development environment (IDE) for R. Together they provide a user-friendly interface and plenty of resources to start exploring. Familiarizing yourself with the basics of R programming, such as data structures, functions, and control flow, lays the groundwork for the stock analysis steps that follow.
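As a quick illustration of those basics, here is a minimal sketch in base R that touches a vector, a function, a loop with a conditional, and a data frame. The prices and the helper name `simple_returns` are made up for this example and are not part of any package.

```r
# Hypothetical closing prices for five trading days (illustrative values only)
prices <- c(100, 102, 101, 105, 107)

# A function: simple period-over-period returns from a price vector
simple_returns <- function(p) {
  diff(p) / head(p, -1)
}

returns <- simple_returns(prices)

# Control flow: label each day as an up or down move
direction <- character(length(returns))
for (i in seq_along(returns)) {
  if (returns[i] > 0) {
    direction[i] <- "up"
  } else {
    direction[i] <- "down"
  }
}

# A data frame ties the pieces together
summary_df <- data.frame(day = 2:5, return = round(returns, 4), direction = direction)
print(summary_df)
```

The same building blocks reappear later when working with real price series.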

Data Acquisition

At the heart of stock analysis lies data – the raw material from which insights are distilled. R facilitates seamless data acquisition from diverse sources, be it online databases, APIs, or local files. Leveraging packages like quantmod and tidyquant, analysts can import historical stock prices, financial statements, and economic indicators, priming the canvas for analysis.
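For the local-file case, a minimal sketch using only base R might look like the following. The file contents are invented for illustration (they are not real quotes), and the column names `Date` and `Close` are assumptions about how such a file could be laid out.

```r
# Write a tiny illustrative CSV to a temporary file (made-up values, not real quotes)
csv_path <- tempfile(fileext = ".csv")
writeLines(c(
  "Date,Close",
  "2020-01-02,100.0",
  "2020-01-03,101.5",
  "2020-01-06,99.8"
), csv_path)

# Read it back and convert the date column from text to Date
prices <- read.csv(csv_path, stringsAsFactors = FALSE)
prices$Date <- as.Date(prices$Date)

# Sort chronologically, since time series functions assume ordered dates
prices <- prices[order(prices$Date), ]
str(prices)
```

From here, `xts::xts(prices$Close, order.by = prices$Date)` would produce a time series object of the same class that `getSymbols` returns by default.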

Example:

    # Install (once) and load the necessary package
    install.packages("quantmod")
    library(quantmod)

    # Define the stock symbol and the date range
    stock_symbol <- "AAPL"
    start_date <- "2015-01-30"
    end_date <- "2020-05-29"

    # Fetch stock data; by default this creates an xts object named AAPL
    getSymbols(stock_symbol, src = "yahoo", from = start_date, to = end_date)

This example fetches historical stock data for Apple Inc. (ticker symbol: AAPL) from January 30, 2015, to May 29, 2020, using the quantmod package.

Visualizing Stock Prices

Once you have obtained the stock data, it helps to visualize it to see how the stock’s price has moved over time. R offers visualization libraries such as ggplot2 for customizable plots; the example below uses the simpler plot method for xts time series objects, which is available once quantmod is loaded.

Example:

    # Plotting stock prices
    plot(as.xts(AAPL$AAPL.Close), main = "AAPL Stock Prices", ylab = "Price (USD)")

This code generates a simple time series plot of Apple’s closing stock prices over the specified time period.
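Since ggplot2 is mentioned as a visualization option, here is a minimal sketch of the same kind of line chart built with it. To keep the example self-contained, it uses synthetic prices rather than downloaded data; the data frame `price_df` and its column names are assumptions made for this illustration.

```r
library(ggplot2)

# Synthetic closing prices standing in for downloaded data (illustrative only)
set.seed(42)
price_df <- data.frame(
  date  = seq(as.Date("2020-01-01"), by = "day", length.out = 100),
  close = 100 + cumsum(rnorm(100))
)

# Line chart of closing price against date
p <- ggplot(price_df, aes(x = date, y = close)) +
  geom_line() +
  labs(title = "Closing Prices (synthetic data)", x = "Date", y = "Price (USD)")

print(p)
```

With real data from `getSymbols`, a comparable data frame could be built with `data.frame(date = index(AAPL), close = as.numeric(Cl(AAPL)))`.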

Performing Basic Analysis

After visualizing the stock prices, you can perform basic analysis to understand key metrics such as returns, volatility, and moving averages. R provides functions and packages to compute these metrics efficiently.

Example:

    # Calculate daily log returns (the first value is NA because there is no prior day)
    daily_returns <- diff(log(AAPL$AAPL.Close))

    # Compute rolling 30-day volatility (standard deviation of returns over a trailing window)
    volatility <- rollapply(daily_returns, width = 30, FUN = sd, align = "right", fill = NA)

    # Plot volatility
    plot(volatility, main = "30-Day Rolling Volatility", ylab = "Volatility")

This code calculates daily log returns and computes the rolling volatility over a window of 30 trading days, providing insight into the stock’s risk profile over time.
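Moving averages, the third metric mentioned above, can be sketched in a similar way. The example below computes a trailing 20-day simple moving average in base R on synthetic prices; the window length of 20 and the variable names are choices made for this illustration.

```r
# Synthetic closing prices (illustrative only)
set.seed(1)
close <- 100 + cumsum(rnorm(60))

# Trailing simple moving average: mean of the current and previous n - 1 values
n <- 20
sma <- as.numeric(stats::filter(close, rep(1 / n, n), sides = 1))

# The first n - 1 entries are NA because the window is incomplete
plot(close, type = "l", main = "Close with 20-Day SMA", xlab = "Day", ylab = "Price (USD)")
lines(sma, col = "blue")
```

With quantmod loaded, `SMA(Cl(AAPL), n = 20)` from the TTR package should give the equivalent series for the downloaded data.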

Conclusion

In the ever-evolving landscape of financial markets, R gives investors a practical way to turn raw market data into insight. With packages for acquiring data, plotting prices, and computing metrics such as returns and volatility, analysts can work through the data step by step and uncover patterns that are easy to miss in raw numbers. As the R ecosystem continues to grow, it will remain a useful companion for investors who want to approach volatile markets with confidence and clarity.
