In biology, the ability to analyze and interpret data is becoming increasingly critical. As biologists dig deeper into complex ecological systems, genetic data, and population trends, running a significance test and reporting a p-value is often not enough to extract meaningful insights. That’s where “The New Statistics with R: An Introduction for Biologists” comes into play, offering biologists a practical, hands-on guide to modern statistical techniques using the R programming language.

This book is not just for statisticians. It’s for any biologist who wants to harness the power of data analysis to fuel their research. Whether you’re dealing with small datasets from controlled laboratory experiments or large datasets from environmental studies, this book will equip you with the tools to draw robust and reliable conclusions.

Why Use R for Statistics in Biology?

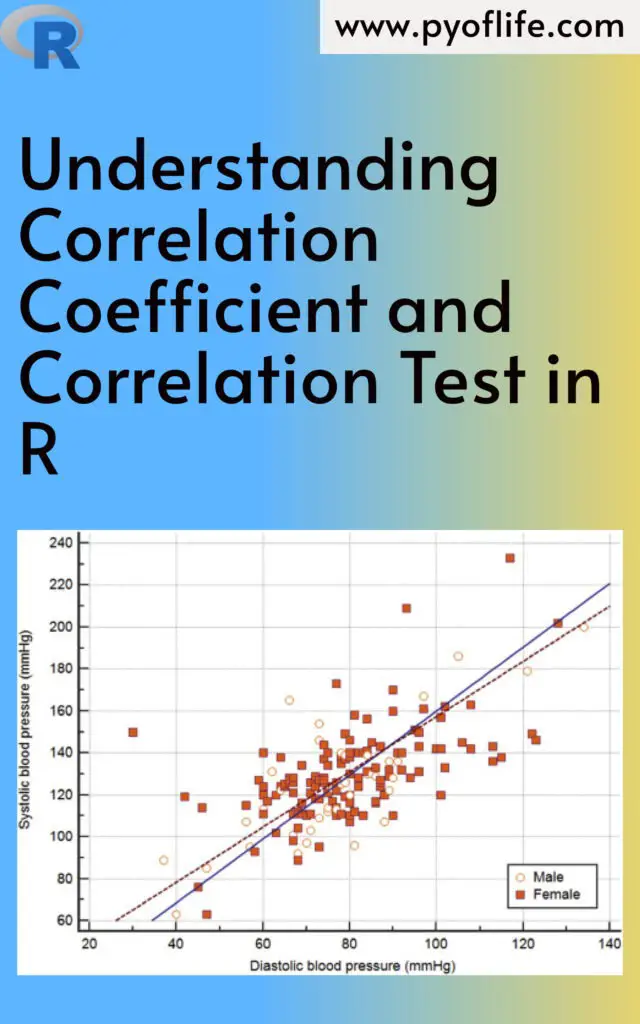

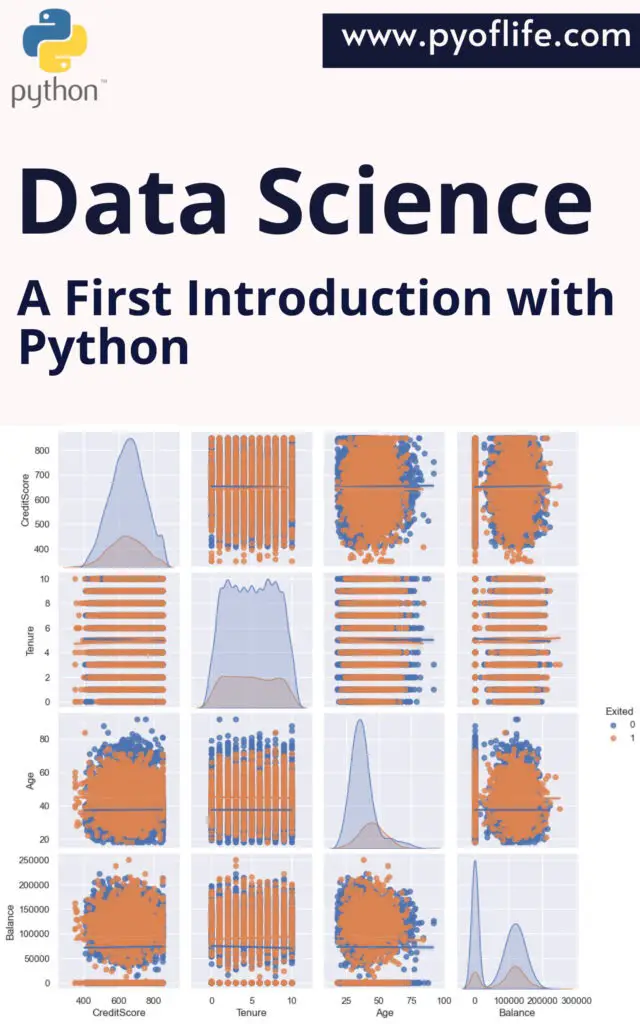

R is a powerful, open-source programming language that has become the go-to tool for data analysis in the biological sciences. Its versatility allows users to handle a wide range of tasks, from data wrangling to advanced statistical modeling, and it’s especially well-suited for visualizing complex biological data. Moreover, its extensive library of packages makes it perfect for tackling both basic and advanced statistical problems, such as hypothesis testing, regression, or Bayesian modeling.

What is “The New Statistics”?

The “New Statistics” refers to a shift from the over-reliance on traditional null hypothesis significance testing (NHST) toward a broader framework that includes effect sizes, confidence intervals, and meta-analysis. These approaches focus on estimating the magnitude of effects and quantifying uncertainty, offering a more nuanced understanding of biological phenomena. In contrast to NHST, where a p-value determines whether an effect is “significant” or not, the New Statistics encourages biologists to think about the size and practical importance of effects, rather than just statistical significance.
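As a rough illustration of that contrast, the sketch below uses made-up measurements to show that the estimated difference between two groups can stay exactly the same while the p-value and the width of the confidence interval change with sample size. The vectors `control` and `treated` are hypothetical, and the larger sample is created artificially by repeating the same values, which real data would never do.

```r
# Hypothetical measurements; not taken from the book
control <- c(10, 12, 14)
treated <- c(11, 13, 15)

# Artificially enlarge the sample by repeating each value 20 times.
# This only isolates the effect of sample size on the test.
control_big <- rep(control, times = 20)
treated_big <- rep(treated, times = 20)

small <- t.test(treated, control)
big   <- t.test(treated_big, control_big)

# Estimated effect: difference in group means (identical in both cases)
diff_small <- unname(small$estimate[1] - small$estimate[2])
diff_big   <- unname(big$estimate[1] - big$estimate[2])

# Width of the 95% confidence interval shrinks as n grows
width_small <- diff(small$conf.int)
width_big   <- diff(big$conf.int)

print(c(difference = diff_small, p_value = small$p.value, ci_width = width_small))
print(c(difference = diff_big, p_value = big$p.value, ci_width = width_big))
```

The point of the comparison is that "significant or not" depends heavily on how much data you have, whereas the effect size and its interval tell you how big the difference is and how precisely it has been estimated.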

Key Features of the Book

- Introduction to R: The book starts with the basics of R, making it accessible to those who may not have prior programming experience. It covers how to set up R, write simple commands, and load datasets for analysis. This sets the stage for biologists unfamiliar with coding to comfortably dive into more advanced concepts.

- Core Concepts in Statistics: Fundamental concepts such as descriptive statistics, probability, and inferential statistics are explained in a biological context. The book introduces both parametric and non-parametric techniques, ensuring that the reader is well-versed in the most appropriate statistical methods for various types of data.

- Effect Size and Confidence Intervals: One of the highlights of the New Statistics is its emphasis on effect sizes, which quantify the strength of a relationship or the magnitude of an effect, rather than just on whether the effect exists. A confidence interval gives a range of plausible values for the true effect size, and its width helps researchers gauge the precision of their estimates.

- Hands-on Examples: The book is packed with biological examples, helping readers understand how statistical methods apply to real-world data. Let’s walk through one.

Example: Estimating the Impact of Fertilizer on Plant Growth

Imagine you’re studying the effect of fertilizer on plant growth, and you’ve gathered data on the height of plants after four weeks in both fertilized and unfertilized conditions. Instead of just running a t-test and reporting a p-value, the New Statistics approach would have you focus on estimating the effect size: how much taller, on average, the fertilized plants are compared to the unfertilized ones.

You might load your data into R like this:

# Sample data

plant_data <- data.frame(

group = c("Fertilized", "Fertilized", "Fertilized", "Unfertilized", "Unfertilized"),

height = c(15.2, 16.8, 14.7, 10.3, 9.8)

)

Next, calculate the mean height for both groups:

mean_height_fertilized <- mean(plant_data$height[plant_data$group == "Fertilized"])

mean_height_unfertilized <- mean(plant_data$height[plant_data$group == "Unfertilized"])

effect_size <- mean_height_fertilized - mean_height_unfertilized

effect_size

The difference in means provides an estimate of how much taller plants grow with fertilizer. But rather than stopping there, you would also calculate the confidence interval for this effect size, giving you a range of values that is likely to capture the true effect in the population.

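In R, this can be done using the t.test function, which by default runs Welch's t-test and so does not assume equal variances in the two groups:

# Welch two-sample t-test; the interval is for Fertilized minus Unfertilized

t_test <- t.test(height ~ group, data = plant_data)

t_test$conf.int

Here t_test$conf.int returns the 95% confidence interval for the difference in means, and the two group means themselves are stored in t_test$estimate. Together they provide a fuller picture of the data than a p-value alone. With only five plants the interval will be wide, so treat this as an illustration rather than a realistic sample size.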
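To see where that interval comes from, the calculation can be sketched by hand. This is a minimal sketch under the assumption that the interval is the default Welch interval from t.test; the variable names (`fert`, `unfert`, `ci_manual`, and so on) are introduced here for illustration only.

```r
# Same illustrative data as in the example above
plant_data <- data.frame(
  group  = c("Fertilized", "Fertilized", "Fertilized", "Unfertilized", "Unfertilized"),
  height = c(15.2, 16.8, 14.7, 10.3, 9.8)
)

fert   <- plant_data$height[plant_data$group == "Fertilized"]
unfert <- plant_data$height[plant_data$group == "Unfertilized"]

# Point estimate: difference in group means
mean_diff <- mean(fert) - mean(unfert)

# Standard error of the difference (variances not assumed equal)
v1 <- var(fert) / length(fert)
v2 <- var(unfert) / length(unfert)
se_diff <- sqrt(v1 + v2)

# Welch-Satterthwaite approximation to the degrees of freedom
df_welch <- (v1 + v2)^2 /
  (v1^2 / (length(fert) - 1) + v2^2 / (length(unfert) - 1))

# 95% confidence interval: estimate +/- t quantile * standard error
ci_manual <- mean_diff + c(-1, 1) * qt(0.975, df_welch) * se_diff

mean_diff
ci_manual
```

The interval is the point estimate plus or minus a multiple of its standard error, so it narrows as the sample grows or as the variation within groups falls.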

Example: Bayesian Approach to Population Trends

One of the key strengths of R is its ability to handle advanced techniques such as Bayesian statistics, which are becoming more prominent in biological research. Suppose you’re analyzing the population trend of a specific bird species over 10 years. Instead of traditional regression methods, you might opt for a Bayesian approach that allows you to incorporate prior knowledge or expert opinions about population growth.

Using the rstanarm package in R, you can model the trend as follows:

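# Illustrative counts (hard-coded for the example, not real survey data)

bird_data <- data.frame(

  year = 1:10,

  population = c(50, 55, 60, 70, 65, 80, 90, 85, 95, 100)

)

# Bayesian linear regression using rstanarm's default priors

library(rstanarm)

fit <- stan_glm(population ~ year, data = bird_data)

summary(fit)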

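This approach not only estimates the relationship between year and population size, but its posterior draws also yield credible intervals, the Bayesian counterpart to confidence intervals. A 95% credible interval is a range that contains the true trend with 95% posterior probability, given the data, the model, and the priors; it is not a guarantee that the true value lies inside. Note that the call above relies on rstanarm's default priors. To incorporate prior knowledge or expert opinion, you would supply your own priors through the prior argument of stan_glm.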
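As a hedged sketch of what that could look like, the block below refits the model with an explicit prior on the slope and extracts a 95% credible interval using rstanarm's posterior_interval function. The prior values (a mean of 5 birds per year with a standard deviation of 5) and the object names `fit_prior` and `slope_ci` are assumptions made for illustration, not recommendations from the book.

```r
library(rstanarm)

# Same illustrative counts as in the example above
bird_data <- data.frame(
  year = 1:10,
  population = c(50, 55, 60, 70, 65, 80, 90, 85, 95, 100)
)

# Hypothetical prior on the yearly change: centred on +5 birds per year, SD 5.
# These numbers are placeholders for whatever prior knowledge you actually have.
fit_prior <- stan_glm(
  population ~ year,
  data = bird_data,
  family = gaussian(),
  prior = normal(location = 5, scale = 5),
  seed = 123,    # fixes the MCMC random seed so runs are repeatable
  refresh = 0    # suppresses the sampler's progress output
)

# 95% credible interval for the slope (change in population per year)
slope_ci <- posterior_interval(fit_prior, prob = 0.95, pars = "year")
slope_ci
```

With only ten data points the prior can noticeably influence the result, so it is worth refitting with a wider or narrower prior to check how sensitive the interval is to that choice.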

Benefits of Learning from This Book

- Improved Statistical Literacy: Biologists will gain a deeper understanding of modern statistical methods, making their research more credible and reliable.

- Reproducible Research: The emphasis on using R promotes transparency and reproducibility, which are increasingly important in scientific research.

- Versatility: Whether you’re interested in genetics, ecology, or evolution, the statistical techniques in this book are applicable across a wide range of biological disciplines.

Final Thoughts

“The New Statistics with R: An Introduction for Biologists” is an invaluable resource for anyone in the biological sciences looking to improve their data analysis skills. It doesn’t just teach you how to perform statistical tests; it teaches you how to think about data in a way that is more robust, meaningful, and aligned with modern scientific standards. By integrating real-world examples with practical R applications, this book ensures that biologists at all levels can better analyze their data, interpret their results, and make impactful scientific contributions.

Whether you’re a seasoned biologist or a student just getting started, this book will help you embrace the power of data, transforming how you approach biological research.
