Beginning R: The Statistical Programming Language

R is a powerful, open-source programming language specifically designed for statistical computing and graphics. With its extensive range of packages and libraries, R has become a popular choice among researchers, data scientists, and statisticians. In this article, we will explore the basics of R and its applications in statistical programming.

Installation and setup of R

Before diving into R, you need to install it on your computer. The installation process is straightforward, and detailed instructions can be found on the official R website. Once installed, you can launch the R console or an integrated development environment (IDE) such as RStudio to start coding in R.

Basic syntax and data structures in R

R has a concise and expressive syntax that makes it easy to perform various operations on data. It supports different data structures like vectors, matrices, data frames, and lists. Vectors are one-dimensional arrays whose elements all share a single type, while matrices are their two-dimensional counterparts. Data frames are tabular structures whose columns can each hold a different type, and lists can contain elements of different types and lengths.
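The four structures described above can be sketched in a few lines of base R. The variable names and values here are made up purely for illustration.

```r
# A numeric vector: one-dimensional, all elements share one type
heights <- c(1.62, 1.75, 1.80)

# A matrix: two-dimensional, filled column by column by default
m <- matrix(1:6, nrow = 2, ncol = 3)

# A data frame: a table whose columns may have different types
people <- data.frame(
  name   = c("Ana", "Ben", "Cy"),
  height = heights
)

# A list: elements may differ in type and length
record <- list(id = 1L, tags = c("a", "b"), scores = m)

length(heights)   # number of elements in the vector
dim(m)            # number of rows and columns in the matrix
str(people)       # compact summary of the data frame
record$tags       # access a list element by name
```

Running `str()` on any of these objects is a quick way to check what kind of structure you are holding.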

Functions and control structures in R

To perform complex operations in R, you need to understand functions and control structures. R provides a wide range of built-in functions for common operations like mathematical calculations, data manipulations, and statistical analyses. Control structures such as conditional statements (if-else) and loops (for, while) let you control the flow of code execution, while user-defined functions let you package logic into reusable code.
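As a minimal sketch of these ideas, the following base R snippet defines a function, uses if-else inside it, and runs a for loop and a while loop. The function name `classify` and the sample values are illustrative assumptions, not part of any library.

```r
# A user-defined function containing a conditional
classify <- function(x) {
  if (x < 0) {
    "negative"
  } else if (x == 0) {
    "zero"
  } else {
    "positive"
  }
}

# A for loop that accumulates a running total
total <- 0
for (value in c(2, 4, 6)) {
  total <- total + value
}

# A while loop that counts how many halvings bring n below 1
n <- 20
steps <- 0
while (n >= 1) {
  n <- n / 2
  steps <- steps + 1
}

# Built-in functions work on whole vectors at once
average <- mean(c(2, 4, 6))

# Apply the user-defined function to each element of a vector
signs <- sapply(c(-3, 0, 5), classify)
```

In practice, vectorised built-ins such as `mean()` and the apply family often replace explicit loops in R code.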

Data manipulation and analysis with R

One of the key strengths of R is its ability to handle data manipulation and analysis tasks. It provides functions to read and write data from various file formats, clean and preprocess data, and perform exploratory data analysis (EDA). R also offers numerous statistical analysis techniques, making it a versatile tool for data scientists.
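The read, clean, and explore workflow might look like the sketch below. It uses only base R and the built-in `mtcars` dataset, written to a temporary CSV file so the example is self-contained; the chosen columns and the threshold of 25 miles per gallon are arbitrary assumptions for illustration.

```r
# Write a built-in dataset to a temporary CSV file, then read it back
path <- tempfile(fileext = ".csv")
write.csv(mtcars, path, row.names = FALSE)
cars <- read.csv(path)

# Clean: keep a few columns and drop any rows with missing values
cars <- na.omit(cars[, c("mpg", "cyl", "hp")])

# Explore: summary statistics, a filtered subset, and a grouped mean
summary(cars$mpg)
efficient  <- subset(cars, mpg > 25)
mpg_by_cyl <- aggregate(mpg ~ cyl, data = cars, FUN = mean)
mpg_by_cyl
```

Packages such as dplyr offer an alternative syntax for the same steps, but the base functions shown here need no installation.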

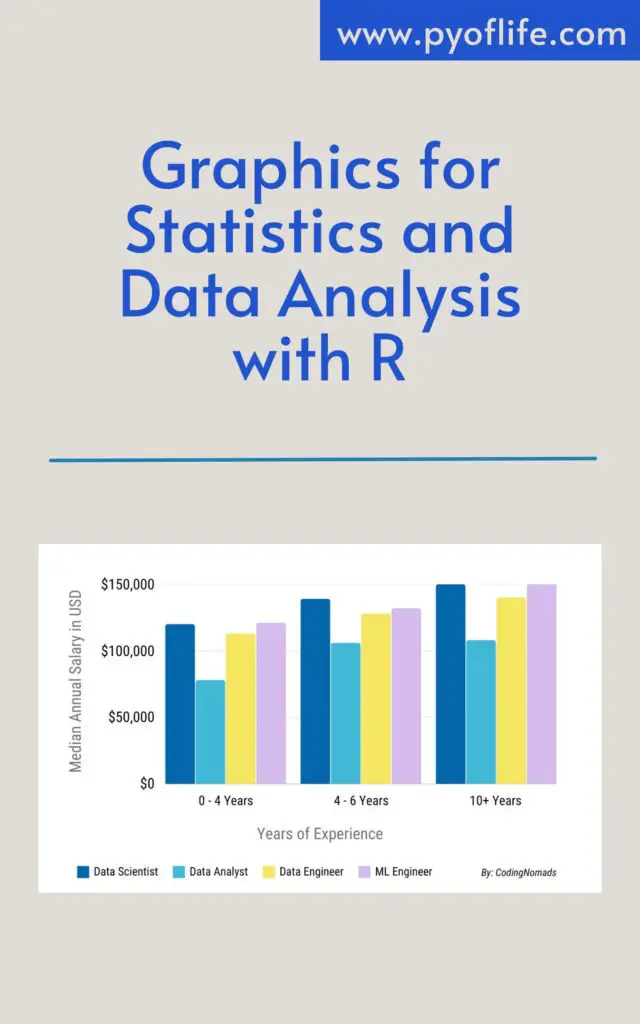

Visualizing data is an essential aspect of data analysis. R offers a wide variety of packages and functions for creating static and interactive visualizations. You can create basic plots like scatter plots, bar charts, and histograms using the base R graphics system. Additionally, packages like ggplot2 provide a more expressive and customizable approach to data visualization.
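A short sketch of both approaches follows, again using the built-in `mtcars` dataset. The base graphics calls need nothing beyond a standard R installation; the ggplot2 part is guarded so that it only runs if that package happens to be installed. Titles and axis labels are illustrative choices.

```r
# Base graphics: scatter plot, histogram, and bar chart
plot(mtcars$wt, mtcars$mpg,
     xlab = "Weight (1000 lbs)", ylab = "Miles per gallon",
     main = "Fuel economy vs. weight")

hist(mtcars$mpg, xlab = "Miles per gallon", main = "Distribution of mpg")

cyl_counts <- table(mtcars$cyl)
barplot(cyl_counts, xlab = "Cylinders", ylab = "Number of cars")

# ggplot2: the same scatter plot, built from layered components
if (requireNamespace("ggplot2", quietly = TRUE)) {
  library(ggplot2)
  p <- ggplot(mtcars, aes(x = wt, y = mpg)) +
    geom_point() +
    labs(x = "Weight (1000 lbs)", y = "Miles per gallon")
  print(p)
}
```

The ggplot2 version stores the plot in an object, which makes it easy to add further layers or themes before printing.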

Introduction to statistical modeling with R

R provides a rich ecosystem of packages for statistical modeling and machine learning. Linear regression, logistic regression, and decision trees are some of the commonly used techniques in statistical modeling. The first two are available through the built-in lm() and glm() functions, and decision trees through packages such as rpart. You can fit models, make predictions, and evaluate the performance of your models, all within R.
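The fit, predict, and evaluate cycle can be sketched with base R alone. This example fits a linear and a logistic regression to the built-in `mtcars` dataset; the choice of predictor (`wt`) and the new weights used for prediction are assumptions made for illustration. Note that the error measure here is computed on the training data, so it is an optimistic estimate.

```r
# Linear regression: fuel economy as a function of weight
fit <- lm(mpg ~ wt, data = mtcars)
coef(fit)                      # intercept and slope

# Predict for two hypothetical cars
new_cars <- data.frame(wt = c(2.5, 3.5))
pred <- predict(fit, newdata = new_cars)

# Evaluate: R-squared and root mean squared error on the training data
r2   <- summary(fit)$r.squared
rmse <- sqrt(mean(residuals(fit)^2))

# Logistic regression: probability of a manual transmission (am = 1)
logit <- glm(am ~ wt, data = mtcars, family = binomial)
prob  <- predict(logit, newdata = new_cars, type = "response")
```

For a more honest assessment, you would hold out part of the data or use cross-validation before trusting these numbers.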

Working with packages and extensions in R

R’s functionality can be extended by installing and loading packages from the Comprehensive R Archive Network (CRAN) repository. These packages provide additional functions and tools for specific tasks such as text mining, time series analysis, or image processing. Learning how to use packages effectively is crucial for harnessing the true power of R.
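Installing and loading a package usually takes two calls, `install.packages()` and `library()`. The sketch below wraps them in a small helper that installs only when the package is missing. The helper name `use_package` is hypothetical, and the demonstration loads `tools`, which ships with R, so nothing is downloaded.

```r
# Hypothetical helper: install a package only if it is missing, then load it
use_package <- function(pkg) {
  if (!requireNamespace(pkg, quietly = TRUE)) {
    install.packages(pkg)  # downloads from the configured CRAN mirror
  }
  library(pkg, character.only = TRUE)
}

use_package("tools")        # ships with R, so no download happens here
# use_package("ggplot2")    # a CRAN package; installed on first use if absent

"package:tools" %in% search()   # TRUE once the package is attached
packageVersion("tools")         # version of the loaded package
```

Calling `install.packages("name")` followed by `library(name)` directly at the console does the same job for one-off use.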

R and its integration with other tools and languages

R can be integrated with other tools and languages to augment its capabilities. For example, you can combine the strengths of R and Python by using the reticulate package, which allows you to call Python code from within R; going the other way, calling R from Python, is typically done with a Python-side package such as rpy2. R also connects to SQL databases through packages such as DBI, enabling you to fetch and process data directly from databases. Furthermore, integration with big data tools like Hadoop enables R to handle large-scale data processing.
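As one possible illustration of database access, the sketch below assumes the DBI and RSQLite packages are installed (neither ships with R) and uses an in-memory SQLite database so that no external server is needed. The table name `cars` and the query are made up for the example, and the code is guarded so it is skipped when the packages are absent.

```r
# Assumes the DBI and RSQLite packages are available; skipped otherwise
if (requireNamespace("DBI", quietly = TRUE) &&
    requireNamespace("RSQLite", quietly = TRUE)) {

  # Open a temporary in-memory SQLite database
  con <- DBI::dbConnect(RSQLite::SQLite(), ":memory:")

  # Copy a built-in data frame into a database table
  DBI::dbWriteTable(con, "cars", mtcars)

  # Run SQL and get the result back as an ordinary data frame
  by_cyl <- DBI::dbGetQuery(
    con,
    "SELECT cyl, AVG(mpg) AS avg_mpg FROM cars GROUP BY cyl ORDER BY cyl"
  )
  print(by_cyl)

  # Always close the connection when finished
  DBI::dbDisconnect(con)
}
```

The same DBI calls work with other database backends; only the driver passed to `dbConnect()` changes.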

Resources and further learning opportunities

A wide range of resources is available for mastering R, including books, tutorials, online courses, and interactive platforms. Books like “R for Data Science” by Hadley Wickham and Garrett Grolemund and “The Art of R Programming” by Norman Matloff are highly recommended for beginners. Online learning platforms like Coursera, DataCamp, and Udemy offer extensive R courses taught by industry experts. Engaging with online communities and participating in data science competitions can also enhance your learning experience.

Conclusion

R is a versatile and powerful programming language for statistical computing and data analysis. It provides a wide range of functionalities, making it a go-to tool for researchers, statisticians, and data scientists. By understanding the basics of R and its diverse applications, you can unlock the potential of statistical programming and take your data analysis skills to new heights.
