Data Analysis with Microsoft Excel: Data analysis is an essential part of any business or research project. It helps you to make informed decisions and understand the patterns and trends in your data. Microsoft Excel is one of the most widely used tools for data analysis, thanks to its versatility and user-friendliness. In this article, we will explore some of the basic and advanced techniques you can use to analyze data in Microsoft Excel.

- Sorting and filtering data:

Sorting and filtering are basic features that help you organize and narrow down your data to a specific range. To sort your data in Excel, select the data range, click on the Data tab, and then click on the Sort icon. Choose the column you want to sort by and select either ascending or descending order.

Filtering is used to display specific data within a range. To filter your data, select the data range, click on the Data tab, and then click on the Filter icon. You can then select the column you want to filter and choose the specific criteria for the filter.

- Pivot tables:

Pivot tables are a powerful tool for analyzing large amounts of data. They allow you to summarize and aggregate data based on different criteria. To create a pivot table in Excel, select the data range, click on the Insert tab, and then click on the Pivot Table icon. You can then choose the columns you want to include in the pivot table and drag and drop them into the appropriate areas of the pivot table.

- Conditional formatting:

Conditional formatting is used to highlight specific data based on certain conditions. For example, you can highlight all the cells that contain a value greater than a certain threshold. To apply conditional formatting in Excel, select the data range, click on the Home tab, and then click on the Conditional Formatting icon. You can then choose the formatting rules you want to apply.

- Charts and graphs:

Charts and graphs are a great way to visualize your data and identify patterns and trends. Excel offers a wide range of chart types, including column charts, line charts, and pie charts. To create a chart in Excel, select the data range, click on the Insert tab, and then click on the chart type you want to create.

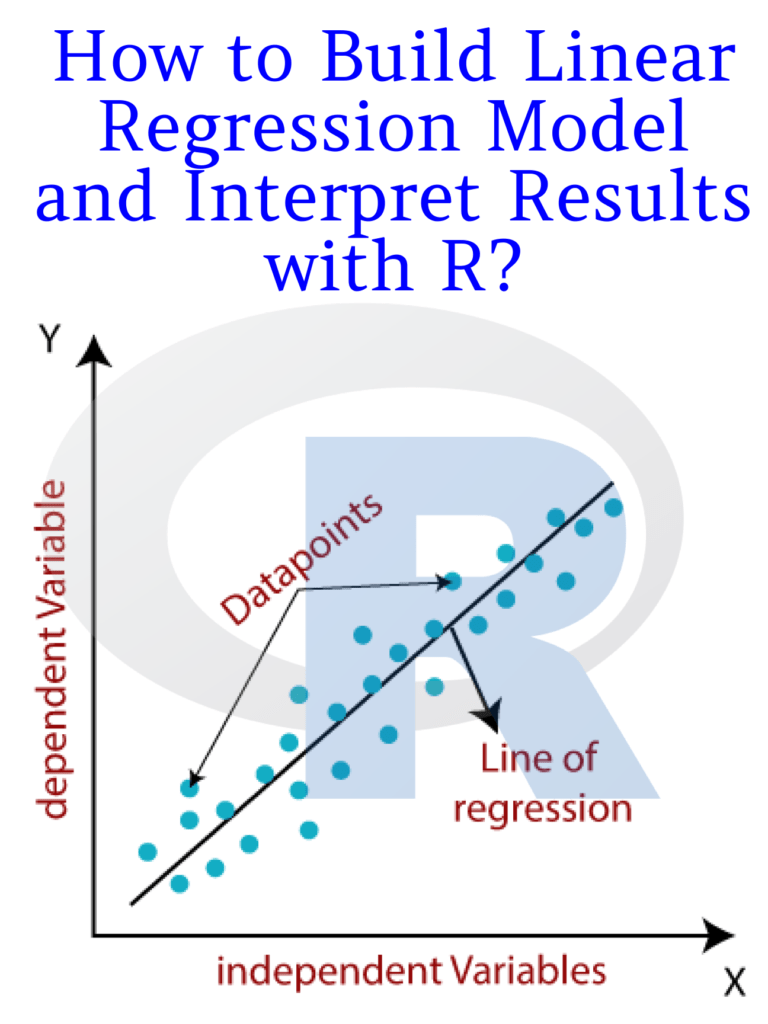

Regression analysis is a statistical technique used to analyze the relationship between two or more variables. Excel provides a built-in tool for performing regression analysis. To perform a regression analysis in Excel, select the data range, click on the Data Analysis icon in the Data tab, and then choose Regression from the list of options.

Microsoft Excel provides a wide range of tools and features for data analysis. By mastering these tools, you can analyze your data more effectively and make informed decisions based on your findings. Whether you are a business professional or a researcher, Excel is a powerful tool that can help you unlock the insights hidden in your data.