In today’s digital era, data analysis is crucial in various fields, including college-level mathematics and statistics. With the advancement of technology, tools like R have become instrumental in handling complex data sets and performing statistical analyses. This article explores the significance of R in college mathematics and statistics, highlighting its capabilities and benefits.

1. Understanding the Basics of R

1.1 What is R?

R is a programming language specifically designed for statistical computing and graphics. It provides a wide range of tools and libraries that enable efficient data analysis, modeling, and visualization. Developed by statisticians, R has gained immense popularity among researchers, data analysts, and educators due to its flexibility and extensive capabilities.

1.2 Why is R essential for college mathematics and statistics?

R offers a comprehensive platform for students and educators to explore and apply mathematical and statistical concepts. Its open-source nature allows easy accessibility, making it an ideal choice for college-level courses. By using R, students can gain hands-on experience in analyzing real-world data, enhancing their understanding of mathematical and statistical principles.

2. R’s Data Manipulation and Visualization Features

2.1 Data Manipulation with R

R provides powerful data manipulation capabilities through libraries like dplyr and tidyverse. These libraries offer functions for filtering, transforming, and summarizing data, allowing students to clean and preprocess datasets efficiently. With R’s intuitive syntax, students can perform complex data operations effortlessly.

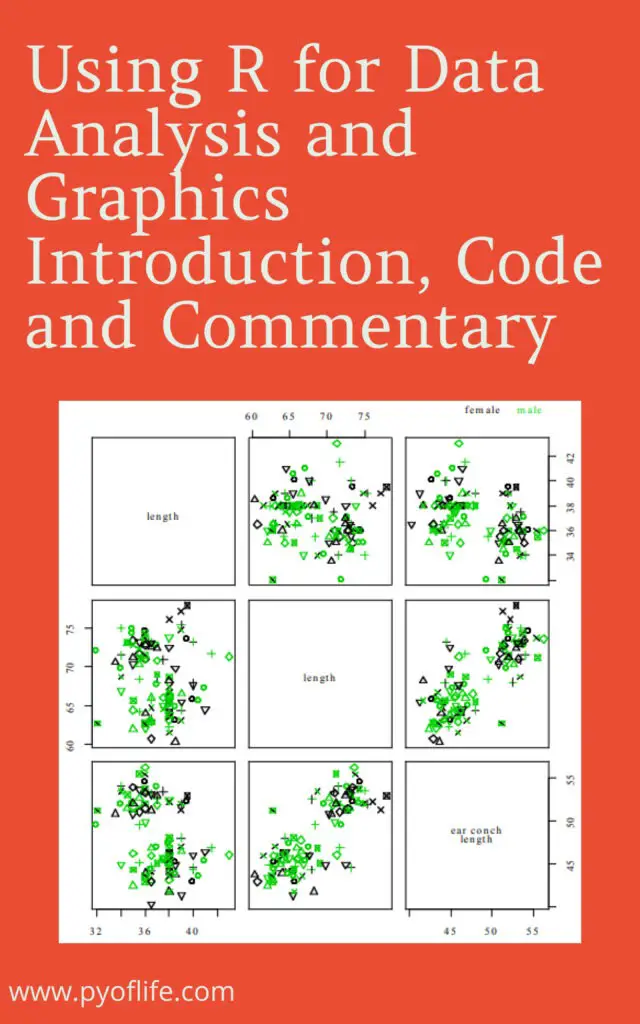

2.2 Data Visualization using R

Visualization is a crucial aspect of data analysis, aiding in the interpretation and communication of findings. R provides several libraries, such as ggplot2, that enable the creation of compelling and insightful visualizations. Students can generate various types of plots, charts, and graphs to explore patterns, relationships, and distributions within the data.

3. Statistical Analysis with R

3.1 Descriptive Statistics with R

R offers a wide range of functions for descriptive statistics, allowing students to calculate measures like mean, median, standard deviation, and correlation coefficients. These statistical measures provide a summary of the data and help students understand its central tendencies and variability.

3.2 Inferential Statistics Using R

Inferential statistics involves making predictions or inferences about a population based on sample data. R provides numerous statistical tests and models for hypothesis testing, regression analysis, analysis of variance (ANOVA), and more. Students can leverage these tools to gain insights from data and make informed decisions.

4. Integrating R into College Courses

4.1 Incorporating R in Mathematics Classes

By integrating R into mathematics classes, educators can create a dynamic learning environment that promotes active engagement and practical application of mathematical concepts. Students can explore mathematical models, simulate scenarios, and analyze data using R, fostering a deeper understanding of mathematical principles.

4.2 R in Statistics Education

Statistics education can greatly benefit from incorporating R as a tool for data analysis and statistical modeling. R’s vast array of statistical functions and visualization capabilities enable students to explore real-world datasets, perform hypothesis testing, and create statistical models. This hands-on approach enhances students’ statistical reasoning and problem-solving skills.

5. Benefits of Using R in College Mathematics and Statistics

5.1 Enhanced Learning Experience

Using R in college mathematics and statistics courses provides students with a practical and immersive learning experience. They can apply theoretical concepts to real data, gaining a deeper understanding of mathematical and statistical principles in action. This active learning approach fosters critical thinking and problem-solving skills.

5.2 Real-world Applications

R is widely used in various industries for data analysis and statistical modeling. By learning R in college, students acquire a valuable skill set that is in high demand in the job market. The ability to analyze and interpret data using R opens doors to career opportunities in fields such as data science, market research, finance, and more.

5.3 Career Opportunities

Proficiency in R can significantly enhance students’ career prospects. Many organizations require professionals who can effectively handle data, perform statistical analyses, and communicate insights. With R expertise, students can pursue careers as data analysts, statisticians, and research scientists, or even pursue further studies in the field of data science.

6. Conclusion

R is an invaluable tool for college-level mathematics and statistics. Its extensive capabilities in data manipulation, visualization, and statistical analysis empower students to explore mathematical and statistical concepts in a practical and engaging manner. By integrating R into college courses, educators can equip students with the skills and knowledge needed to excel in data-driven fields.

FAQs

Q1: Is R difficult to learn for beginners?

Learning R can be challenging initially, especially for those with no prior programming experience. However, with practice and dedication, beginners can grasp its fundamentals and gradually build their proficiency. Many online resources, tutorials, and communities are available to support the learning process.

Q2: Can R handle large datasets?

Yes, R is capable of handling large datasets through various optimization techniques and packages specifically designed for efficient memory management. With proper coding practices, R can efficiently process and analyze datasets of substantial size.

Q3: Are there alternatives to R for statistical analysis?

Yes, there are alternative programming languages and tools for statistical analysis, such as Python, SAS, and SPSS. However, R’s extensive library ecosystem and its popularity among statisticians and researchers make it a preferred choice for many in the field.

Q4: Can I use R on different operating systems?

Yes, R is compatible with major operating systems like Windows, macOS, and Linux. It provides consistent functionality across platforms, allowing users to seamlessly work with R on their preferred operating system.

Q5: Is R only useful for mathematics and statistics?

While R has its roots in mathematics and statistics, it has evolved into a versatile tool used in various disciplines. R is widely employed in fields like data science, bioinformatics, finance, social sciences, and more. Its flexibility and extensive libraries make it applicable to diverse domains.