Introduction to R and RStudio

Using R and RStudio for Data Management: R and RStudio are powerful, versatile tools for data science and statistical analysis. Combining the R programming language with the RStudio development environment gives analysts a single workflow for managing data, performing statistical analysis, and generating graphics.

Understanding Data Management with R and RStudio

Importing and Exporting Data

One of the fundamental tasks in data analysis is importing data from various sources. With R and RStudio, users can import data from CSV files, Excel spreadsheets, databases, and other formats. Exporting processed data is just as straightforward, which makes it easy to share results and collaborate.
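As a minimal sketch of a CSV round trip using only base R, the example below writes a small data frame to a temporary file and reads it back. The `scores` data frame and its column names are made up for illustration. Excel files and databases usually need add-on packages (for example `readxl` or `DBI`), which are not shown here.

```r
# Hypothetical example data; the column names are assumptions for illustration.
scores <- data.frame(
  id    = 1:3,
  group = c("a", "b", "a"),
  score = c(4.5, 3.2, 5.1),
  stringsAsFactors = FALSE
)

# Export to a temporary CSV file.
# row.names = FALSE avoids writing an extra index column.
csv_path <- tempfile(fileext = ".csv")
write.csv(scores, csv_path, row.names = FALSE)

# Import the file again; stringsAsFactors = FALSE keeps text as character.
imported <- read.csv(csv_path, stringsAsFactors = FALSE)

# Inspect the structure of the imported data.
str(imported)
```

In a real project, `csv_path` would point to a file inside the project directory rather than a temporary location.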

Data Cleaning and Transformation

Before starting any analysis, the data must be clean and consistent. R and RStudio provide many functions and packages for data cleaning and transformation. From handling missing values to standardizing data formats, they let analysts preprocess data efficiently and lay a solid foundation for subsequent analysis.
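The sketch below illustrates two of the cleaning steps mentioned above, standardizing text and handling missing values, using base R only. The `raw` data frame is invented for illustration, and mean imputation is just one simple choice among many, not a general recommendation.

```r
# Hypothetical messy data; names and values are assumptions for illustration.
raw <- data.frame(
  name   = c(" Alice", "bob ", "CAROL", "Dan"),
  height = c(170, NA, 165, 181),
  weight = c(60, 72, NA, NA),
  stringsAsFactors = FALSE
)

# Standardize text: trim surrounding whitespace and convert to lower case.
raw$name <- tolower(trimws(raw$name))

# Count missing values per column before changing anything.
missing_counts <- colSums(is.na(raw))

# Impute missing heights with the mean of the observed heights.
raw$height[is.na(raw$height)] <- mean(raw$height, na.rm = TRUE)

# Drop rows that still contain a missing value (here: missing weight).
clean <- raw[complete.cases(raw), ]

print(missing_counts)
print(clean)
```

Whether to impute or drop incomplete rows depends on the analysis; the example does both only to show the two idioms side by side.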

Statistical Analysis with R and RStudio

Descriptive Statistics

R and RStudio offer an extensive array of functions for descriptive statistics, allowing analysts to summarize and explore datasets comprehensively. From calculating measures of central tendency to visualizing distributions, descriptive statistics in R and RStudio give a clear picture of a dataset's characteristics.
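As a short illustration, the following sketch computes measures of central tendency and spread with base R. It uses the built-in `mtcars` dataset, which is an assumption made here for convenience and not a dataset discussed in this article.

```r
# Built-in example dataset shipped with R.
data(mtcars)
mpg <- mtcars$mpg

# Measures of central tendency.
center <- c(mean = mean(mpg), median = median(mpg))

# Measures of spread: standard deviation and interquartile range.
spread <- c(sd = sd(mpg), iqr = IQR(mpg))

# Five-number summary plus the mean.
print(summary(mpg))

# Group-wise summary: average miles per gallon by number of cylinders.
mpg_by_cyl <- tapply(mtcars$mpg, mtcars$cyl, mean)

print(center)
print(spread)
print(mpg_by_cyl)
```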

Inferential Statistics

Beyond descriptive statistics, R and RStudio let analysts perform inferential statistics. Whether the task is hypothesis testing, regression analysis, or ANOVA, R provides robust functions and packages for rigorous statistical inference, helping users derive meaningful insights and make informed decisions.
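The three techniques named above can each be sketched in one call with functions from the `stats` package that ships with R. The built-in `mtcars` dataset and the particular variables chosen are assumptions for illustration only.

```r
# Built-in example dataset shipped with R.
data(mtcars)

# Hypothesis test: Welch two-sample t-test comparing mpg between
# automatic (am = 0) and manual (am = 1) transmissions.
tt <- t.test(mpg ~ am, data = mtcars)

# Regression analysis: simple linear model of mpg on car weight.
fit <- lm(mpg ~ wt, data = mtcars)

# ANOVA: one-way analysis of variance of mpg across cylinder counts.
# cyl is converted to a factor so it is treated as a grouping variable.
anova_fit <- aov(mpg ~ factor(cyl), data = mtcars)

print(tt)
print(summary(fit))
print(summary(anova_fit))
```

Each call returns an object whose components (for example `tt$p.value` or `coef(fit)`) can be extracted for reporting.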

Creating Graphics with R and RStudio

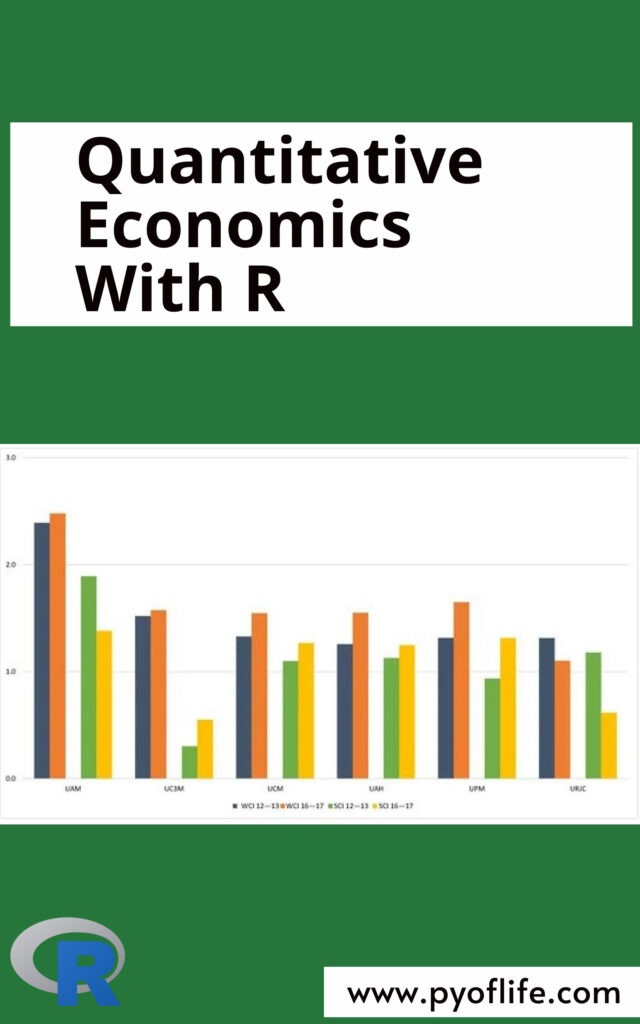

Basic Plots

Visualizing data is essential for gaining insights and communicating findings effectively. R and RStudio offer a rich suite of plotting functions for creating basic plots such as histograms, scatter plots, and bar charts. With customizable features and intuitive syntax, producing informative visualizations takes only a few lines of code.
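The following sketch draws the three basic plot types mentioned above with base R graphics. It uses the built-in `mtcars` dataset as an assumed example and writes to a temporary PDF so that it also runs in a non-interactive session. In RStudio, the `pdf()` and `dev.off()` calls can be omitted and the plots appear in the Plots pane.

```r
# Built-in example dataset shipped with R.
data(mtcars)

# Open a PDF graphics device writing to a temporary file.
plot_file <- tempfile(fileext = ".pdf")
pdf(plot_file)

# Histogram: distribution of miles per gallon.
hist(mtcars$mpg,
     main = "Distribution of mpg",
     xlab = "Miles per gallon")

# Scatter plot: weight against miles per gallon.
plot(mtcars$wt, mtcars$mpg,
     main = "mpg vs. weight",
     xlab = "Weight (1000 lbs)",
     ylab = "Miles per gallon")

# Bar chart: number of cars per cylinder count.
barplot(table(mtcars$cyl),
        main = "Cars by cylinder count",
        xlab = "Cylinders",
        ylab = "Count")

# Close the device so the file is written to disk.
invisible(dev.off())
```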

Advanced Visualizations

R and RStudio also handle more sophisticated graphics. Whether the goal is an interactive plot, a heatmap, or a 3D visualization, R offers advanced packages and tools for diverse visualization needs. From exploratory data analysis to presentation-ready graphics, R and RStudio give analysts the means to convey complex insights clearly.
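As a hedged sketch, the example below produces a heatmap and a 3D surface using only base R functions (`heatmap()` and `persp()`). The choice of `mtcars` columns and the surface function are assumptions for illustration. Interactive plots generally require add-on packages and are not shown here.

```r
# Built-in example dataset shipped with R.
data(mtcars)

# Correlation matrix of a few numeric variables (an arbitrary selection).
cor_mat <- cor(mtcars[, c("mpg", "wt", "hp", "disp")])

# Write to a temporary PDF so the sketch runs non-interactively.
adv_file <- tempfile(fileext = ".pdf")
pdf(adv_file)

# Heatmap of the correlation matrix; symm = TRUE treats it as symmetric.
heatmap(cor_mat, symm = TRUE, main = "Correlation heatmap")

# 3D surface: a bell-shaped function evaluated on a regular grid.
x <- seq(-3, 3, length.out = 30)
y <- x
z <- outer(x, y, function(a, b) exp(-(a^2 + b^2) / 2))
persp(x, y, z,
      theta = 30, phi = 25,   # viewing angles
      col = "lightblue",
      xlab = "x", ylab = "y", zlab = "z",
      main = "3D surface")

# Close the device so the file is written to disk.
invisible(dev.off())
```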

Integrating R and RStudio with Other Tools

Compatibility with other tools further enhances the utility of R and RStudio. Whether it's integrating with Python libraries for machine learning or connecting to cloud-based platforms for big data analysis, R and RStudio interoperate with a diverse ecosystem of resources that users can draw on for their analyses.

Best Practices for Efficient Data Management and Analysis

To get the most out of R and RStudio, adhering to best practices is crucial. This includes maintaining organized project structures, documenting code and analyses, using version control systems, and staying up to date with the latest packages and techniques. By embracing these practices, analysts can streamline their workflow, enhance reproducibility, and foster collaboration within their teams.

Conclusion

In conclusion, R and RStudio are strong tools for data management, statistical analysis, and graphics generation. Their versatility, ease of use, and robust functionality make them a preferred choice among data scientists, analysts, and researchers worldwide. With R and RStudio, analysts can extract valuable insights from data, support evidence-based decision-making, and drive innovation across many domains.
