In today’s data-driven world, regression modeling has become a cornerstone of predictive analytics, enabling businesses and researchers to uncover insights and make data-backed decisions. Understanding regression modeling strategies is essential for building robust models, improving accuracy, and addressing real-world complexities.

This article dives into the core concepts, strategies, and best practices in regression modeling, tailored for both beginners and advanced practitioners.

What Is Regression Modeling?

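Regression modeling is a statistical technique used to examine the relationship between a dependent variable and one or more independent variables. It is used to predict outcomes, identify trends, and quantify associations between variables in a variety of fields, including finance, healthcare, and marketing. On its own, a regression fit does not establish causation; causal claims also require an appropriate study design or explicit identifying assumptions.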

Popular types of regression models include:

- Linear Regression: Analyzing the linear relationship between variables.

- Logistic Regression: Modeling probabilities for binary outcomes.

- Polynomial Regression: Capturing non-linear relationships.

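- Ridge and Lasso Regression: Penalizing coefficient size. Ridge stabilizes estimates under multicollinearity, while lasso can also perform variable selection by shrinking some coefficients to exactly zero.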
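As a minimal sketch of the linear case, the snippet below fits a straight line by ordinary least squares. NumPy is an assumed dependency, and the data are hypothetical and noiseless (generated from y = 2 + 3x) so that the recovered coefficients are easy to check.

```python
import numpy as np

# Hypothetical noiseless data generated from y = 2 + 3x.
x = np.array([0.0, 1.0, 2.0, 3.0, 4.0])
y = 2.0 + 3.0 * x

# Design matrix: a column of ones for the intercept, then the predictor.
X = np.column_stack([np.ones_like(x), x])

# Ordinary least squares: choose coefficients that minimize the sum of squared residuals.
coef, *_ = np.linalg.lstsq(X, y, rcond=None)
intercept, slope = coef
print(f"intercept={intercept:.2f}, slope={slope:.2f}")
```

Adding further columns to the design matrix (for example `x**2`) turns the same call into a polynomial regression.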

Key Strategies in Regression Modeling

- Data Preparation and Exploration

- Clean the Data: Handle missing values and outliers, and ensure data consistency.

- Understand Relationships: Use visualization tools to explore variable relationships.

Tip: Correlation matrices and scatterplots can help identify multicollinearity and initial patterns.
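The tip above can be sketched with a small correlation check. The data here are synthetic and hypothetical (`x2` is deliberately built as a near copy of `x1`), and the 0.8 threshold is an arbitrary choice for illustration, not a rule from this article.

```python
import numpy as np

rng = np.random.default_rng(0)  # fixed seed so the sketch is reproducible

# Hypothetical predictors: x2 is nearly a copy of x1, x3 is independent.
n = 200
x1 = rng.normal(size=n)
x2 = x1 + rng.normal(scale=0.1, size=n)
x3 = rng.normal(size=n)
X = np.column_stack([x1, x2, x3])
names = ["x1", "x2", "x3"]

# Pairwise Pearson correlations between the columns of X.
corr = np.corrcoef(X, rowvar=False)

# Flag pairs whose absolute correlation exceeds the (arbitrary) threshold.
threshold = 0.8
flagged = [
    (names[i], names[j], round(float(corr[i, j]), 3))
    for i in range(len(names))
    for j in range(i + 1, len(names))
    if abs(corr[i, j]) > threshold
]
print(flagged)
```

Pairwise correlations only catch two-variable redundancy; a predictor that is a combination of several others can slip through, so this is a first screen rather than a full diagnosis.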

- Model Selection

- Match the model to your problem. For example, use logistic regression for classification tasks and ridge regression to handle overfitting in high-dimensional data.

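- Use evaluation metrics such as adjusted R-squared, AIC, and BIC to compare candidate models. Plain R-squared never decreases when predictors are added, so it should not be used alone to compare models of different sizes; lower AIC and BIC values indicate a better trade-off between fit and complexity.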
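To illustrate comparing candidate models, here is a rough sketch that computes adjusted R-squared, AIC, and BIC for two least-squares fits. It assumes Gaussian errors and drops additive constants shared by all models, so only differences between AIC or BIC values are meaningful. The data and the helper names (`fit_rss`, `adjusted_r2`, `aic_bic`) are hypothetical.

```python
import numpy as np

def fit_rss(X, y):
    """Fit by least squares and return the residual sum of squares."""
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    resid = y - X @ beta
    return float(resid @ resid)

def adjusted_r2(rss, y, k):
    """Adjusted R-squared; k counts all coefficients including the intercept."""
    n = len(y)
    tss = float(np.sum((y - y.mean()) ** 2))
    return 1.0 - (rss / (n - k)) / (tss / (n - 1))

def aic_bic(rss, n, k):
    """AIC and BIC for a Gaussian linear model, up to a shared constant."""
    log_term = n * np.log(rss / n)
    return float(log_term + 2 * k), float(log_term + k * np.log(n))

# Hypothetical data with a genuinely quadratic relationship.
rng = np.random.default_rng(1)
n = 150
x = rng.uniform(-3, 3, size=n)
y = 1.0 + 2.0 * x + 1.5 * x**2 + rng.normal(scale=1.0, size=n)

candidates = {
    "linear": np.column_stack([np.ones(n), x]),
    "quadratic": np.column_stack([np.ones(n), x, x**2]),
}

results = {}
for name, X in candidates.items():
    rss = fit_rss(X, y)
    k = X.shape[1]
    aic, bic = aic_bic(rss, n, k)
    results[name] = {"adj_r2": adjusted_r2(rss, y, k), "aic": aic, "bic": bic}
    print(name, results[name])
```

Because the simulated relationship is quadratic, the quadratic candidate should score lower on both AIC and BIC here; on real data the ranking is not known in advance.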

- Feature Engineering

- Create New Features: Combine or transform existing variables for improved predictive power.

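- Standardize or Normalize: Scale variables so that coefficients are comparable and so that penalties in regularized models such as ridge and lasso treat all predictors on an equal footing.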
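A minimal sketch of both feature engineering steps follows. The `income` and `age` columns are hypothetical, as are the derived features; the one design point worth noting is that the scaling statistics come from the training rows only, so no information from held-out rows leaks into the fit.

```python
import numpy as np

def standardize(train, other):
    """Z-score both arrays using the training mean and standard deviation only."""
    mean = train.mean(axis=0)
    std = train.std(axis=0)
    std = np.where(std == 0, 1.0, std)  # leave constant columns unscaled
    return (train - mean) / std, (other - mean) / std

rng = np.random.default_rng(2)

# Hypothetical raw features on very different scales.
income = rng.normal(50_000, 15_000, size=100)
age = rng.normal(40, 10, size=100)

# Derived features: an interaction term and a squared term.
features = np.column_stack([income, age, income * age, age**2])

# Split first, then scale using statistics from the training rows.
train, test = features[:80], features[80:]
train_z, test_z = standardize(train, test)

print(train_z.mean(axis=0).round(6), train_z.std(axis=0).round(6))
```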

- Addressing Multicollinearity

Multicollinearity occurs when independent variables are highly correlated, which can inflate the variance of coefficient estimates and make them unstable. Address it through:

- Dropping redundant variables.

- Using regularization techniques like ridge or lasso regression.
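One common way to quantify multicollinearity is the variance inflation factor (VIF), which this article does not name but which fits the idea of finding redundant variables. The sketch below computes it by regressing each predictor on the others; the data are synthetic and the `vif` helper is a hypothetical name.

```python
import numpy as np

def vif(X):
    """VIF per column: 1 / (1 - R^2) from regressing that column on the others."""
    n, p = X.shape
    out = []
    for j in range(p):
        target = X[:, j]
        others = np.column_stack([np.ones(n), np.delete(X, j, axis=1)])
        beta, *_ = np.linalg.lstsq(others, target, rcond=None)
        resid = target - others @ beta
        r2 = 1.0 - (resid @ resid) / np.sum((target - target.mean()) ** 2)
        out.append(float(1.0 / (1.0 - r2)))
    return out

rng = np.random.default_rng(3)
n = 200
x1 = rng.normal(size=n)
x2 = x1 + rng.normal(scale=0.1, size=n)  # nearly redundant with x1
x3 = rng.normal(size=n)                  # independent of the others
X = np.column_stack([x1, x2, x3])

vifs_all = vif(X)                 # x1 and x2 should show large values
vifs_reduced = vif(X[:, [0, 2]])  # after dropping the redundant x2
print([round(v, 2) for v in vifs_all])
print([round(v, 2) for v in vifs_reduced])
```

A VIF near 1 means a predictor is largely unrelated to the others; cutoffs such as 5 or 10 are rules of thumb rather than hard limits.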

- Validation and Testing

- Split the data into training, validation, and testing sets.

- Use cross-validation to ensure model generalizability.
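Cross-validation can be sketched in a few lines. This is a minimal k-fold loop for an ordinary least-squares model, assuming NumPy; the data are synthetic and `k_fold_mse` is a hypothetical helper name.

```python
import numpy as np

def k_fold_mse(X, y, k=5, seed=0):
    """Return the held-out mean squared error for each of k folds."""
    rng = np.random.default_rng(seed)
    idx = rng.permutation(len(y))      # shuffle before splitting
    folds = np.array_split(idx, k)
    scores = []
    for i in range(k):
        test_idx = folds[i]
        train_idx = np.concatenate([folds[j] for j in range(k) if j != i])
        # Fit on the k-1 training folds only.
        beta, *_ = np.linalg.lstsq(X[train_idx], y[train_idx], rcond=None)
        pred = X[test_idx] @ beta
        scores.append(float(np.mean((y[test_idx] - pred) ** 2)))
    return scores

# Hypothetical data: y = 3 + 2x plus noise with standard deviation 0.5.
rng = np.random.default_rng(42)
n = 100
x = rng.uniform(0, 10, size=n)
y = 3.0 + 2.0 * x + rng.normal(scale=0.5, size=n)
X = np.column_stack([np.ones(n), x])

scores = k_fold_mse(X, y, k=5)
print("fold MSEs:", [round(s, 3) for s in scores])
print("mean MSE:", round(float(np.mean(scores)), 3))
```

Any preprocessing that learns from the data, such as scaling, would need to be refit inside each fold to keep the held-out estimate honest.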

- Interpretability

- Keep the model understandable by minimizing unnecessary complexity.

- Use tools like partial dependence plots and feature importance rankings to explain model behavior.

Advanced Techniques to Improve Regression Models

- Regularization Methods: Employ ridge and lasso regression to shrink coefficients and enhance model stability.

- Interaction Terms: Capture relationships between variables by including interaction effects in the model.

- Non-linear Models: Use polynomial regression or generalized additive models (GAMs) for non-linear relationships.

- Automated Model Tuning: Leverage tools like grid search or Bayesian optimization to fine-tune hyperparameters.
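The regularization and tuning ideas above can be combined in one small sketch: a closed-form ridge fit, with the penalty strength chosen by a grid search on a validation split. The data, the grid of penalties, and the `ridge_fit` helper are all hypothetical, and a library implementation would normally be preferable in practice.

```python
import numpy as np

def ridge_fit(Z, y_centered, lam):
    """Closed-form ridge coefficients for standardized Z and centered y."""
    p = Z.shape[1]
    return np.linalg.solve(Z.T @ Z + lam * np.eye(p), Z.T @ y_centered)

rng = np.random.default_rng(4)
n, p = 120, 8
shared = rng.normal(size=(n, 1))
X = shared + 0.3 * rng.normal(size=(n, p))  # columns share a component, so they are correlated
true_beta = np.array([2.0, -1.0, 0.0, 0.0, 1.5, 0.0, 0.0, 0.5])
y = X @ true_beta + rng.normal(scale=1.0, size=n)

X_train, X_val = X[:80], X[80:]
y_train, y_val = y[:80], y[80:]

# Center and scale using training statistics only.
x_mean, x_std = X_train.mean(axis=0), X_train.std(axis=0)
y_mean = y_train.mean()
Z_train = (X_train - x_mean) / x_std
Z_val = (X_val - x_mean) / x_std

lambdas = [0.01, 0.1, 1.0, 10.0, 100.0]  # arbitrary grid for illustration
val_mse, coef_norm = {}, {}
for lam in lambdas:
    beta = ridge_fit(Z_train, y_train - y_mean, lam)
    pred = y_mean + Z_val @ beta
    val_mse[lam] = float(np.mean((y_val - pred) ** 2))
    coef_norm[lam] = float(np.linalg.norm(beta))

best_lam = min(val_mse, key=val_mse.get)
print("validation MSE by lambda:", {k: round(v, 3) for k, v in val_mse.items()})
print("selected lambda:", best_lam)
```

The coefficient norm shrinks as the penalty grows, which is the stabilizing effect regularization is meant to provide; the validation error decides how much shrinkage is worthwhile.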

Applications of Regression Modeling

Regression modeling has versatile applications:

- Healthcare: Predict patient outcomes or disease risks.

- Marketing: Optimize campaign performance by analyzing customer data.

- Finance: Forecast stock prices, credit risks, or economic trends.

- Manufacturing: Predict equipment failures and optimize production processes.

Challenges and Best Practices

Despite its power, regression modeling comes with challenges:

- Overfitting: Avoid models that perform well on training data but fail to generalize.

- Data Quality: Poor data can lead to inaccurate predictions.

- Bias-Variance Tradeoff: Balance model complexity to minimize prediction errors.
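Overfitting and the bias-variance tradeoff can be seen by fitting polynomials of increasing degree to a small sample and comparing training error with error on fresh data. The sketch below uses a hypothetical sine-shaped signal with noise; the degrees 1, 3, and 12 are arbitrary choices for illustration.

```python
import numpy as np

def poly_design(x, degree):
    """Design matrix with columns 1, x, x^2, ..., x^degree."""
    return np.vander(x, degree + 1, increasing=True)

rng = np.random.default_rng(5)

def make_data(n):
    """Hypothetical signal sin(pi * x) with Gaussian noise."""
    x = rng.uniform(-1, 1, size=n)
    y = np.sin(np.pi * x) + rng.normal(scale=0.3, size=n)
    return x, y

x_train, y_train = make_data(30)   # small training sample
x_test, y_test = make_data(200)    # fresh data from the same process

train_mse, test_mse = {}, {}
for degree in (1, 3, 12):
    A_train = poly_design(x_train, degree)
    beta, *_ = np.linalg.lstsq(A_train, y_train, rcond=None)
    train_mse[degree] = float(np.mean((y_train - A_train @ beta) ** 2))
    test_mse[degree] = float(np.mean((y_test - poly_design(x_test, degree) @ beta) ** 2))
    print(f"degree={degree:2d}  train MSE={train_mse[degree]:.3f}  test MSE={test_mse[degree]:.3f}")
```

Training error can only fall as the degree rises, because each model contains the previous one. The test error is the number to watch: a low-degree fit is typically too rigid, and a very high-degree fit typically chases noise.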

Best Practices:

- Always validate your model on unseen data.

- Regularly revisit the model as new data becomes available.

- Document assumptions and ensure ethical use of data.

Conclusion

Regression modeling strategies provide a structured approach to uncovering meaningful patterns and making reliable predictions. By combining data preparation, thoughtful model selection, and rigorous testing, you can create robust models that drive actionable insights. Whether you’re solving business challenges or advancing research, mastering these strategies is essential for success.

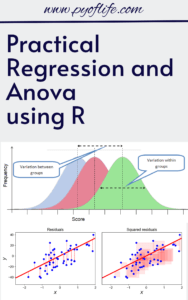
