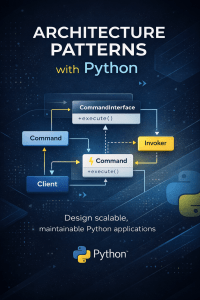

Introduction: Unveiling the Essence of Architecture Patterns with Python

Architecture Patterns help developers design systems that are scalable, maintainable, and efficient. Python, a versatile general-purpose language, works well with many of these patterns thanks to its readable syntax and its large ecosystem of libraries and frameworks. This article surveys a range of architecture patterns and shows how each one can be applied in Python. Whether you are a seasoned developer or just starting out, the goal is to give you a practical sense of where each pattern fits.

1. Understanding Architecture Patterns with Python

Architecture Patterns are reusable, proven solutions to recurring design problems. They shape how a system’s components are structured and organized, and how those components communicate. Python’s flexibility and simplicity let developers apply a wide range of these patterns to different design challenges.

2. The Power of Microservices Architecture

Microservices Architecture structures an application as a set of small, independently deployable services. Each service owns its own data and communicates with the others over network APIs, typically HTTP or a message broker. Python’s concise syntax and frameworks such as Flask and FastAPI make individual services quick to build and maintain.
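The sketch below uses only the standard library to show the core idea. A hypothetical inventory service owns its data and exposes it over HTTP. A second piece of code, standing in for an orders service, reaches that data only through the API. The service names, the /stock/ path, and the STOCK data are illustrative assumptions. A real deployment would run each service as its own process, usually on a framework such as Flask or FastAPI.

```python
import json
import threading
import urllib.request
from http.server import BaseHTTPRequestHandler, HTTPServer

# Hypothetical "inventory" service data; only this service reads or writes it.
STOCK = {"apple": 5, "pear": 0}

# Empty ProxyHandler: talk to the service directly even if proxy env vars are set.
_OPENER = urllib.request.build_opener(urllib.request.ProxyHandler({}))


class InventoryHandler(BaseHTTPRequestHandler):
    def do_GET(self):
        # Expects paths like /stock/apple and answers with JSON.
        item = self.path.rsplit("/", 1)[-1]
        body = json.dumps({"item": item, "quantity": STOCK.get(item, 0)}).encode()
        self.send_response(200)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, format, *args):
        pass  # silence per-request logging for the demo


def start_inventory_service():
    # Port 0 asks the OS for any free port; serve in a background thread.
    server = HTTPServer(("127.0.0.1", 0), InventoryHandler)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    return server


def is_in_stock(base_url, item):
    # The "orders" side depends only on the HTTP contract, not on STOCK itself.
    with _OPENER.open(f"{base_url}/stock/{item}") as resp:
        return json.load(resp)["quantity"] > 0


if __name__ == "__main__":
    server = start_inventory_service()
    url = f"http://127.0.0.1:{server.server_address[1]}"
    print(is_in_stock(url, "apple"))  # True
    print(is_in_stock(url, "pear"))   # False
    server.shutdown()
    server.server_close()
```

Because the orders code only knows the URL and the JSON shape, the inventory service can be rewritten, redeployed, or scaled without touching it.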

3. Implementing the MVC Pattern

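The Model-View-Controller (MVC) pattern divides an application into three parts. The model holds data and business rules, the view handles presentation, and the controller handles input. MVC is widely used in web development. Django follows a close variant it calls Model-Template-View (MTV). Flask leaves the structure up to you, but it is easily organized along MVC lines. Either way, the separation improves code modularity and maintainability.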
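To make the three roles concrete, here is a framework-free sketch. The to-do example and the class names are hypothetical. In Django or Flask the view would render a template and the controller would be a request handler, but the division of responsibilities is the same.

```python
from dataclasses import dataclass, field


@dataclass
class TodoModel:
    """Model: owns the data and the rules for changing it."""
    items: list = field(default_factory=list)

    def add(self, title):
        if not title.strip():
            raise ValueError("title must not be empty")
        self.items.append(title.strip())


class TodoView:
    """View: turns model data into output (plain text here, HTML in a web app)."""

    def render(self, items):
        return "\n".join(f"- {item}" for item in items) or "(no items)"


class TodoController:
    """Controller: takes user input, updates the model, and asks the view to render."""

    def __init__(self, model, view):
        self.model = model
        self.view = view

    def add_item(self, title):
        self.model.add(title)
        return self.view.render(self.model.items)


controller = TodoController(TodoModel(), TodoView())
print(controller.add_item("write tests"))
print(controller.add_item("ship it"))
```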


4. Leveraging the Event-Driven Architecture

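Event-driven architecture revolves around producing, detecting, consuming, and reacting to events. Components publish events without knowing which other components will handle them, which keeps them loosely coupled. Python’s standard asyncio module and third-party event libraries let developers build responsive, real-time applications that react to such triggers efficiently.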
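A minimal in-process publish/subscribe bus illustrates the decoupling. The EventBus class and the "user_registered" event are hypothetical names for this sketch, not a library API. Production systems usually put a message broker between producers and consumers.

```python
from collections import defaultdict
from typing import Callable


class EventBus:
    """Minimal in-process publish/subscribe bus (illustrative only)."""

    def __init__(self):
        self._handlers = defaultdict(list)

    def subscribe(self, event_type: str, handler: Callable[[dict], None]) -> None:
        self._handlers[event_type].append(handler)

    def publish(self, event_type: str, payload: dict) -> None:
        # The producer does not know who, if anyone, reacts to the event.
        for handler in self._handlers.get(event_type, []):
            handler(payload)


bus = EventBus()
sent_emails = []
bus.subscribe("user_registered", lambda e: sent_emails.append(f"Welcome, {e['name']}!"))
bus.subscribe("user_registered", lambda e: print("audit:", e))
bus.publish("user_registered", {"name": "Ada"})
```

New reactions, such as analytics, can be added by subscribing another handler, without changing the code that publishes the event.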

5. The Flexibility of Layered Architecture

Layered Architecture divides an application into logical layers, typically presentation, business logic, and data access. Each layer has a specific responsibility and depends only on the layer below it. Python’s module and package system makes these boundaries easy to express, fostering clean code organization.
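The following sketch shows three layers in one file for brevity. The UserDAO, UserService, and register_endpoint names are hypothetical. SQL stays in the data layer, rules stay in the service layer, and output formatting stays in the presentation layer.

```python
import sqlite3


# Data access layer: the only code that knows about SQL.
class UserDAO:
    def __init__(self, conn):
        self.conn = conn
        self.conn.execute("CREATE TABLE IF NOT EXISTS users (name TEXT PRIMARY KEY)")

    def insert(self, name):
        self.conn.execute("INSERT INTO users (name) VALUES (?)", (name,))

    def exists(self, name):
        row = self.conn.execute("SELECT 1 FROM users WHERE name = ?", (name,)).fetchone()
        return row is not None


# Business (service) layer: rules only, no SQL and no formatting.
class UserService:
    def __init__(self, dao):
        self.dao = dao

    def register(self, name):
        name = name.strip().lower()
        if self.dao.exists(name):
            raise ValueError(f"{name} is already registered")
        self.dao.insert(name)
        return name


# Presentation layer: turns service results into a response for the caller.
def register_endpoint(service, raw_name):
    try:
        return {"status": 201, "user": service.register(raw_name)}
    except ValueError as exc:
        return {"status": 409, "error": str(exc)}


service = UserService(UserDAO(sqlite3.connect(":memory:")))
print(register_endpoint(service, " Ada "))  # {'status': 201, 'user': 'ada'}
print(register_endpoint(service, "ADA"))    # {'status': 409, 'error': 'ada is already registered'}
```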

6. Python in Domain-Driven Design (DDD)

Domain-Driven Design (DDD) aligns the structure and vocabulary of the code with the business domain it serves. It uses building blocks such as entities, value objects, and aggregates. Python’s expressive syntax, classes, and dataclasses make these building blocks straightforward to model, resulting in more cohesive and understandable codebases.
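Here is a small sketch of two DDD building blocks using dataclasses. Money is an immutable value object, and Order is an entity acting as an aggregate root that guards its own invariants. The order domain and its rules are hypothetical examples.

```python
from dataclasses import dataclass
from decimal import Decimal


@dataclass(frozen=True)
class Money:
    """Value object: immutable and compared by value, not identity."""
    amount: Decimal
    currency: str

    def __add__(self, other: "Money") -> "Money":
        if self.currency != other.currency:
            raise ValueError("currency mismatch")
        return Money(self.amount + other.amount, self.currency)

    def __mul__(self, factor: int) -> "Money":
        return Money(self.amount * factor, self.currency)


@dataclass
class OrderLine:
    sku: str
    quantity: int
    unit_price: Money


class Order:
    """Entity and aggregate root: has an identity and enforces the order's rules."""

    def __init__(self, order_id: str, currency: str):
        self.order_id = order_id
        self.currency = currency
        self.lines: list[OrderLine] = []
        self.submitted = False

    def add_line(self, sku: str, quantity: int, unit_price: Money) -> None:
        if self.submitted:
            raise RuntimeError("cannot change a submitted order")
        if quantity <= 0:
            raise ValueError("quantity must be positive")
        self.lines.append(OrderLine(sku, quantity, unit_price))

    def total(self) -> Money:
        total = Money(Decimal("0"), self.currency)
        for line in self.lines:
            total = total + line.unit_price * line.quantity
        return total

    def submit(self) -> None:
        if not self.lines:
            raise ValueError("cannot submit an empty order")
        self.submitted = True


order = Order("o-1", "EUR")
order.add_line("BOOK", 2, Money(Decimal("12.50"), "EUR"))
print(order.total())  # Money(amount=Decimal('25.00'), currency='EUR')
```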

7. Exploring the Hexagonal Architecture

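The Hexagonal Architecture, also known as Ports and Adapters, separates the core application logic from external components such as databases, message queues, and user interfaces. The core defines ports (interfaces), and adapters implement those ports for specific technologies. Python has no built-in dependency injection framework. Passing dependencies in through constructors, combined with duck typing or typing.Protocol, fits this pattern well and makes adapters easy to swap, for example in tests.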
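In the sketch below, a port is declared with typing.Protocol, the core depends only on that port, and two interchangeable adapters implement it. The reminder use case and the class names are hypothetical.

```python
from typing import Protocol


class NotificationPort(Protocol):
    """Port: what the core needs, expressed in the application's own terms."""

    def send(self, recipient: str, message: str) -> None: ...


class ReminderService:
    """Core logic: depends only on the port, never on a concrete adapter."""

    def __init__(self, notifier: NotificationPort):
        self.notifier = notifier

    def remind(self, user: str, task: str) -> None:
        self.notifier.send(user, f"Reminder: {task}")


class ConsoleAdapter:
    """Adapter for local runs: prints instead of emailing."""

    def send(self, recipient: str, message: str) -> None:
        print(f"to {recipient}: {message}")


class InMemoryAdapter:
    """Adapter for tests: records messages so assertions can inspect them."""

    def __init__(self):
        self.sent = []

    def send(self, recipient: str, message: str) -> None:
        self.sent.append((recipient, message))


# Wiring happens at the edge of the application (the "composition root").
ReminderService(ConsoleAdapter()).remind("ada", "review PR")
```

An email or SMS adapter would be one more class with a send method. ReminderService itself would not change.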

8. Event Sourcing with Python

Event Sourcing stores every change to an application’s state as an immutable sequence of events, and the current state is rebuilt by replaying them. Because the full history is kept, developers get an audit trail and can reconstruct past states. Python’s simplicity makes the core idea easy to implement.
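This minimal sketch uses a hypothetical bank account. The balance is never stored directly. It is derived from an append-only list of events, and a fresh object can rebuild the same state from that list. Persisting the events to a durable store is left out.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    kind: str    # "deposited" or "withdrawn"
    amount: int  # in cents, to avoid float rounding


class BankAccount:
    """State (balance) is derived from events; the event list is the source of truth."""

    def __init__(self, history=()):
        self.events: list[Event] = []
        self.balance = 0
        for event in history:  # rebuild current state by replaying stored events
            self._record(event)

    def deposit(self, amount: int) -> None:
        if amount <= 0:
            raise ValueError("amount must be positive")
        self._record(Event("deposited", amount))

    def withdraw(self, amount: int) -> None:
        if amount <= 0 or amount > self.balance:
            raise ValueError("invalid amount or insufficient funds")
        self._record(Event("withdrawn", amount))

    def _record(self, event: Event) -> None:
        # Append to the log, then update the in-memory view of the state.
        self.events.append(event)
        if event.kind == "deposited":
            self.balance += event.amount
        elif event.kind == "withdrawn":
            self.balance -= event.amount
        else:
            raise ValueError(f"unknown event {event.kind!r}")


account = BankAccount()
account.deposit(10_000)
account.withdraw(2_500)
print(account.balance)                       # 7500
print(BankAccount(account.events).balance)  # 7500, rebuilt from the log
```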

9. CQRS: Command Query Responsibility Segregation

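CQRS separates the command (write) side of an application from the query (read) side. Each side can then use a model optimized for its job and be scaled independently. Python’s ecosystem makes both sides straightforward to build. Keeping the read side in sync with the write side does add complexity, however, and smaller applications may not need it.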
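The sketch below separates a command handler that validates and stores orders from a read model shaped for a single query. All names are hypothetical. Here the read model is updated synchronously through a callback. In real systems this sync is often asynchronous, which makes the read side eventually consistent.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PlaceOrder:
    """Command: expresses an intent to change state."""
    order_id: str
    customer: str
    total_cents: int


class OrderWriteModel:
    """Command side: enforces rules and stores orders; exposes no query methods."""

    def __init__(self, on_change):
        self._orders = {}
        self._on_change = on_change  # hook that keeps the read side in sync

    def handle(self, cmd: PlaceOrder) -> None:
        if cmd.order_id in self._orders:
            raise ValueError("duplicate order id")
        if cmd.total_cents <= 0:
            raise ValueError("total must be positive")
        self._orders[cmd.order_id] = cmd
        self._on_change(cmd)


class CustomerSpendingView:
    """Query side: a denormalized projection built for one specific read."""

    def __init__(self):
        self._totals = {}

    def project(self, cmd: PlaceOrder) -> None:
        self._totals[cmd.customer] = self._totals.get(cmd.customer, 0) + cmd.total_cents

    def total_spent(self, customer: str) -> int:
        return self._totals.get(customer, 0)


view = CustomerSpendingView()
writes = OrderWriteModel(on_change=view.project)
writes.handle(PlaceOrder("o-1", "ada", 1_200))
writes.handle(PlaceOrder("o-2", "ada", 800))
print(view.total_spent("ada"))  # 2000
```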

10. Building Data Pipelines with Python

Data pipelines move data between systems and process it along the way, typically in extract, transform, and load (ETL) stages. Python-based tools such as Apache Airflow, which defines workflows as Python code, simplify scheduling and orchestrating these pipelines. This makes complex data workflows manageable.
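The sketch below uses plain generators rather than Airflow to show the extract, transform, and load stages themselves. An orchestrator like Airflow would schedule and monitor steps such as these. The CSV columns and the dict used as a sink are hypothetical stand-ins for real sources and a real database.

```python
import csv
import io
from typing import Iterable, Iterator


def extract(lines: Iterable[str]) -> Iterator[dict]:
    # Extract: parse raw CSV rows lazily, one at a time.
    yield from csv.DictReader(lines)


def transform(rows: Iterable[dict]) -> Iterator[dict]:
    # Transform: normalize values and skip malformed rows.
    for row in rows:
        try:
            yield {"city": row["city"].strip().title(), "temp_c": float(row["temp_c"])}
        except (AttributeError, KeyError, TypeError, ValueError):
            continue


def load(rows: Iterable[dict], sink: dict) -> dict:
    # Load: write into a destination (a dict standing in for a database table).
    for row in rows:
        sink.setdefault(row["city"], []).append(row["temp_c"])
    return sink


raw = io.StringIO("city,temp_c\nparis,21.5\nOSLO,12\nparis,n/a\nOslo,14\n")
result = load(transform(extract(raw)), {})
print(result)  # {'Paris': [21.5], 'Oslo': [12.0, 14.0]}
```

Because each stage is a generator, rows stream through one at a time instead of being held in memory all at once.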

11. The Resilience of the Circuit Breaker Pattern

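The Circuit Breaker Pattern prevents a failing dependency from causing cascading failures. After a set number of consecutive errors, the breaker opens and rejects calls immediately instead of waiting on timeouts. After a cool-down period, it lets a trial request through and closes again if that request succeeds. Python’s exception handling makes the pattern simple to implement, and third-party libraries offer ready-made implementations.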
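A compact sketch of the closed, open, and half-open states follows. The thresholds are arbitrary, and flaky_service is a hypothetical stand-in for a remote call. The clock parameter defaults to time.monotonic and exists only so the timing can be controlled in tests. This breaker is not thread-safe.

```python
import time
from typing import Callable, Optional


class CircuitOpenError(RuntimeError):
    """Raised instead of calling the dependency while the circuit is open."""


class CircuitBreaker:
    def __init__(self, max_failures: int = 3, reset_timeout: float = 30.0,
                 clock: Callable[[], float] = time.monotonic):
        self.max_failures = max_failures
        self.reset_timeout = reset_timeout
        self.clock = clock
        self.failures = 0
        self.opened_at: Optional[float] = None  # None means the circuit is closed

    def call(self, func, *args, **kwargs):
        if self.opened_at is not None and self.clock() - self.opened_at < self.reset_timeout:
            # Open: fail fast without touching the struggling dependency.
            raise CircuitOpenError("circuit open; not calling dependency")
        # Closed, or half-open once the timeout has elapsed: let the call through.
        try:
            result = func(*args, **kwargs)
        except Exception:
            self.failures += 1
            # A failed half-open trial, or too many failures, (re)opens the circuit.
            if self.opened_at is not None or self.failures >= self.max_failures:
                self.opened_at = self.clock()
            raise
        # Any success closes the circuit and resets the failure count.
        self.failures = 0
        self.opened_at = None
        return result


def flaky_service():
    raise ConnectionError("service unavailable")


breaker = CircuitBreaker(max_failures=2, reset_timeout=5.0)
for attempt in range(3):
    try:
        breaker.call(flaky_service)
    except CircuitOpenError:
        print(f"attempt {attempt}: failing fast")
    except ConnectionError:
        print(f"attempt {attempt}: call failed")
```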

12. Applying the Repository Pattern

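The Repository Pattern separates data access logic from the rest of the application by exposing a collection-like interface (add, get, list) for domain objects. Object-relational mappers (ORMs) such as SQLAlchemy can sit behind that interface, and swapping in an in-memory implementation makes business logic easy to test.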
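The sketch defines an abstract repository with two implementations: one backed by the standard-library sqlite3 module and one in memory for tests. The Product model and the method names are hypothetical. An SQLAlchemy-backed class could implement the same interface.

```python
import abc
import sqlite3
from dataclasses import dataclass
from typing import Optional


@dataclass
class Product:
    sku: str
    name: str


class ProductRepository(abc.ABC):
    """The rest of the application depends on this interface only."""

    @abc.abstractmethod
    def add(self, product: Product) -> None: ...

    @abc.abstractmethod
    def get(self, sku: str) -> Optional[Product]: ...


class SqliteProductRepository(ProductRepository):
    def __init__(self, conn: sqlite3.Connection):
        self.conn = conn
        conn.execute("CREATE TABLE IF NOT EXISTS products (sku TEXT PRIMARY KEY, name TEXT)")

    def add(self, product: Product) -> None:
        self.conn.execute("INSERT INTO products VALUES (?, ?)", (product.sku, product.name))

    def get(self, sku: str) -> Optional[Product]:
        row = self.conn.execute("SELECT sku, name FROM products WHERE sku = ?", (sku,)).fetchone()
        return Product(*row) if row else None


class InMemoryProductRepository(ProductRepository):
    """Test double: same interface, no database."""

    def __init__(self):
        self._items = {}

    def add(self, product: Product) -> None:
        self._items[product.sku] = product

    def get(self, sku: str) -> Optional[Product]:
        return self._items.get(sku)


def describe(repo: ProductRepository, sku: str) -> str:
    # Business code sees only the repository interface, never SQL.
    product = repo.get(sku)
    return product.name if product else "unknown product"
```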

13. Python and the Gateway Pattern

The Gateway Pattern wraps all access to an external system, such as a payment provider or a third-party API, in a single object. The rest of the code then does not depend on that system’s protocol or data format. Python’s standard library and third-party packages such as the requests HTTP client make such gateways simple to write.
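In the sketch below, the provider’s request fields (cust, amt, cur), its response format, and the transport callable are all hypothetical. The point is that this translation happens in one class. Injecting the transport also lets tests replace the real provider with a fake.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ChargeResult:
    ok: bool
    reference: str


class PaymentGateway:
    """Single entry point to a (hypothetical) external payment provider."""

    def __init__(self, transport):
        # transport: any callable taking a request dict and returning a response dict,
        # e.g. a thin wrapper around an HTTP client. Its shape is an assumption.
        self._transport = transport

    def charge(self, customer_id: str, amount_cents: int) -> ChargeResult:
        # Translate domain values into the provider's (assumed) wire format.
        request = {"cust": customer_id, "amt": amount_cents, "cur": "EUR"}
        response = self._transport(request)
        # Translate the provider's response back into a domain result.
        if response.get("status") == "approved":
            return ChargeResult(True, response["txn_id"])
        return ChargeResult(False, response.get("error_code", "unknown"))


def fake_provider(request):
    # Stand-in for the real provider, handy in tests.
    if request["amt"] > 100_000:
        return {"status": "declined", "error_code": "LIMIT"}
    return {"status": "approved", "txn_id": f"txn-{request['cust']}"}


gateway = PaymentGateway(fake_provider)
print(gateway.charge("c42", 2_500))    # ChargeResult(ok=True, reference='txn-c42')
print(gateway.charge("c42", 500_000))  # ChargeResult(ok=False, reference='LIMIT')
```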

14. Scaling Horizontally with the Load Balancing Pattern

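Load Balancing distributes incoming traffic across multiple instances of a service to improve performance and availability. In production this is usually handled by a dedicated load balancer, such as Nginx, HAProxy, or a cloud provider’s service, sitting in front of several Python processes. On a single machine, application servers such as Gunicorn run multiple worker processes, which helps Python services use all available CPU cores.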
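To show the algorithm a load balancer applies, here is a round-robin sketch that skips unhealthy backends. It is illustrative only, since real deployments rely on the dedicated tools mentioned above. The backend addresses are placeholders.

```python
import itertools


class RoundRobinBalancer:
    """Hands out backends in turn, skipping any marked unhealthy."""

    def __init__(self, backends):
        self.backends = list(backends)
        self.healthy = set(self.backends)
        self._cycle = itertools.cycle(self.backends)

    def mark_down(self, backend):
        self.healthy.discard(backend)

    def mark_up(self, backend):
        self.healthy.add(backend)

    def next_backend(self):
        # Try at most one full pass over the list before giving up.
        for _ in range(len(self.backends)):
            backend = next(self._cycle)
            if backend in self.healthy:
                return backend
        raise RuntimeError("no healthy backends")


lb = RoundRobinBalancer(["10.0.0.1:8000", "10.0.0.2:8000", "10.0.0.3:8000"])
lb.mark_down("10.0.0.2:8000")
picks = [lb.next_backend() for _ in range(4)]
print(picks)  # ['10.0.0.1:8000', '10.0.0.3:8000', '10.0.0.1:8000', '10.0.0.3:8000']
```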

15. Dockerizing Architectures with Python

Docker containers provide isolation and portability for applications. Official Python base images make it straightforward to package a Python service together with its dependencies. The service then behaves consistently on a developer laptop, in CI, and in production.

16. Security Considerations in Architectural Design

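Security is paramount in architectural design. Python’s standard library includes modules such as hashlib, hmac, secrets, and ssl. Third-party packages such as cryptography, along with the authentication features built into frameworks such as Django, cover authentication, encryption, and secure communication.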
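Password storage is one example of these building blocks in use. The sketch below hashes passwords with salted PBKDF2-HMAC-SHA256 from hashlib and compares digests in constant time with hmac.compare_digest. The iteration count and the "iterations$salt$hash" storage format are assumptions to tune and adapt. Dedicated packages such as bcrypt or argon2-cffi are common alternatives.

```python
import hashlib
import hmac
import secrets

ITERATIONS = 600_000  # PBKDF2 work factor; an assumption, tune for your hardware


def hash_password(password: str) -> str:
    salt = secrets.token_bytes(16)  # a unique random salt for every password
    digest = hashlib.pbkdf2_hmac("sha256", password.encode(), salt, ITERATIONS)
    # Store the parameters with the hash so they can change later.
    return f"{ITERATIONS}${salt.hex()}${digest.hex()}"


def verify_password(password: str, stored: str) -> bool:
    iterations, salt_hex, digest_hex = stored.split("$")
    candidate = hashlib.pbkdf2_hmac(
        "sha256", password.encode(), bytes.fromhex(salt_hex), int(iterations)
    )
    # Constant-time comparison avoids leaking how many bytes matched.
    return hmac.compare_digest(candidate, bytes.fromhex(digest_hex))
```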

17. Monitoring and Logging Strategies with Python

Effective monitoring and logging are essential for maintaining system health. Python’s built-in logging module provides leveled, configurable logging. Third-party tools such as OpenTelemetry add metrics and distributed tracing on top of it.
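Here is a small sketch built only on the standard logging module. A formatter emits one JSON object per line, which log collectors can parse, and a decorator records how long each call took. The "fields" attribute convention and the process_order function are hypothetical. The StringIO stream stands in for stdout or a log file.

```python
import io
import json
import logging
import time
from functools import wraps


class JsonFormatter(logging.Formatter):
    """Formats each record as one JSON object per line."""

    def format(self, record):
        payload = {"level": record.levelname, "logger": record.name, "message": record.getMessage()}
        payload.update(getattr(record, "fields", {}))  # extra structured fields, if any
        return json.dumps(payload)


def timed(logger):
    """Decorator that logs each call's duration in milliseconds."""
    def decorator(func):
        @wraps(func)
        def wrapper(*args, **kwargs):
            start = time.perf_counter()
            try:
                return func(*args, **kwargs)
            finally:
                elapsed_ms = round((time.perf_counter() - start) * 1000, 2)
                logger.info("call finished",
                            extra={"fields": {"function": func.__name__, "duration_ms": elapsed_ms}})
        return wrapper
    return decorator


stream = io.StringIO()  # stand-in for stdout or a file shipped to a collector
handler = logging.StreamHandler(stream)
handler.setFormatter(JsonFormatter())
log = logging.getLogger("orders")
log.addHandler(handler)
log.setLevel(logging.INFO)


@timed(log)
def process_order(order_id):
    return f"processed {order_id}"


process_order("o-1")
print(stream.getvalue().strip())
```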

18. Façade Pattern Simplified with Python

The Façade Pattern offers a simplified interface to a complex subsystem of an application. Python’s expressive syntax and classes make it easy to build façades that hide a subsystem’s internal steps behind a few well-named methods.
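In the sketch below, three hypothetical subsystems each expose their own method, and a CheckoutFacade wraps them in a single place_order call. Callers no longer need to know which subsystems exist or in what order they must be called.

```python
class InventorySystem:
    def reserve(self, sku, qty):
        return f"reserved {qty} x {sku}"


class PaymentSystem:
    def charge(self, customer, amount_cents):
        return f"charged {customer} {amount_cents} cents"


class ShippingSystem:
    def schedule(self, customer, sku):
        return f"shipping {sku} to {customer}"


class CheckoutFacade:
    """One simple method hides the subsystems and the order they must be called in."""

    def __init__(self):
        self.inventory = InventorySystem()
        self.payments = PaymentSystem()
        self.shipping = ShippingSystem()

    def place_order(self, customer, sku, qty, unit_price_cents):
        return [
            self.inventory.reserve(sku, qty),
            self.payments.charge(customer, qty * unit_price_cents),
            self.shipping.schedule(customer, sku),
        ]


print(CheckoutFacade().place_order("ada", "BOOK-1", 2, 1_250))
# ['reserved 2 x BOOK-1', 'charged ada 2500 cents', 'shipping BOOK-1 to ada']
```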

19. Python’s Role in Cloud-Native Architecture

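Cloud-native architecture emphasizes scalability and resilience in cloud environments, typically through containers, stateless services, and configuration supplied by the environment. Official Python SDKs from the major cloud providers, such as boto3 for AWS, simplify building applications that use managed cloud services.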
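One common cloud-native practice, taken from the twelve-factor methodology, is reading configuration from environment variables. The same container image can then run unchanged in every environment. In the sketch below, the variable names and defaults are hypothetical. The env parameter defaults to os.environ, and a plain dict can be passed in tests.

```python
import os
from dataclasses import dataclass


@dataclass(frozen=True)
class Settings:
    database_url: str
    port: int
    debug: bool


def load_settings(env=os.environ) -> Settings:
    """Build settings from environment variables, falling back to local defaults."""
    return Settings(
        database_url=env.get("DATABASE_URL", "sqlite:///local.db"),
        port=int(env.get("PORT", "8000")),
        debug=env.get("DEBUG", "false").lower() in {"1", "true", "yes"},
    )


print(load_settings({"PORT": "9000", "DEBUG": "true"}))
# Settings(database_url='sqlite:///local.db', port=9000, debug=True)
```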

20. GraphQL Integration with Python

GraphQL is a query language for APIs that lets clients request exactly the fields they need in a single query. Python libraries such as Graphene and Strawberry make it possible to build flexible GraphQL APIs that serve diverse client requirements.

21. Event-Driven Microservices with Python

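Combining event-driven and microservices architecture lets services react to each other’s events instead of calling each other directly, which reduces coupling and improves responsiveness. Python’s asyncio module and client libraries for message brokers such as Kafka and RabbitMQ support building these event-driven microservices.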
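The sketch below simulates two services in one process with asyncio. An asyncio.Queue stands in for a message broker, and None serves as a shutdown signal. The service names and event shape are hypothetical. In a real system, each service would be a separate process connected through a broker such as Kafka or RabbitMQ.

```python
import asyncio


async def order_service(bus: asyncio.Queue, orders):
    # Publishes an "order_placed" event per order, then a sentinel to signal shutdown.
    for order_id in orders:
        await bus.put({"type": "order_placed", "order_id": order_id})
    await bus.put(None)


async def shipping_service(bus: asyncio.Queue, shipped: list):
    # Reacts to events as they arrive; it never calls order_service directly.
    while (event := await bus.get()) is not None:
        if event["type"] == "order_placed":
            await asyncio.sleep(0)  # placeholder for real asynchronous I/O
            shipped.append(event["order_id"])


async def main():
    bus = asyncio.Queue()  # stands in for a message broker topic
    shipped = []
    await asyncio.gather(
        order_service(bus, ["o-1", "o-2", "o-3"]),
        shipping_service(bus, shipped),
    )
    return shipped


print(asyncio.run(main()))  # ['o-1', 'o-2', 'o-3']
```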

22. Choosing the Right Architecture for Your Project

Selecting the appropriate architecture pattern depends on various factors. Consider your project’s requirements, scalability needs, and team’s familiarity with Python to make an informed decision.

23. Common Challenges and Solutions

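Architectural design comes with its share of challenges. Common hurdles include added operational complexity, keeping data consistent across service boundaries, and over-engineering projects that do not need an elaborate architecture. Effective strategies include starting with the simplest pattern that meets current needs, adopting more complex patterns incrementally, and investing early in automated tests and monitoring.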

24. Future Trends in Architecture Patterns with Python

The field of architecture patterns keeps evolving. Emerging trends include serverless architecture, edge computing, and AI integration. Following them will help you make the most of Python’s capabilities in future designs.

25. Conclusion: Empowering Software Design with Python

In conclusion, Architecture Patterns give software systems a clear, well-understood structure. Python’s adaptability and its rich ecosystem of libraries and frameworks make it a versatile tool for applying them. By choosing the patterns covered in this article where they fit your needs, you can build systems that are easier to scale, maintain, and optimize.

FAQs

Q: How does Python contribute to architectural design?

A: Python’s flexibility and extensive libraries make it well suited to implementing a wide range of architecture patterns.

Q: What is Microservices Architecture and its relation to Python?

A: Microservices Architecture involves building applications from small, independent services. Python’s lightweight nature and libraries facilitate the creation of microservices-based systems.

Q: Can Python be used for event-driven architecture?

A: Yes. Python’s built-in asyncio module and third-party event-driven libraries enable real-time, responsive applications.

Q: How does the MVC pattern enhance web development with Python?

A: Django (which uses a close variant called Model-Template-View) and Flask let you separate data, presentation, and request handling. This separation improves code modularity and maintainability in web applications.

Q: What is the significance of security considerations in architectural design with Python?

A: Security needs to be designed in from the start rather than added later. Python’s libraries and frameworks provide well-tested building blocks for authentication, encryption, and secure communication, but they protect a system only when used correctly.

Q: How can developers choose the right architecture pattern for their projects?

A: Developers should consider project requirements, scalability needs, and team expertise to select the architecture pattern that aligns with their goals.
