Statistics and data visualization play crucial roles in analyzing and interpreting data, enabling us to gain valuable insights and make informed decisions. With the increasing availability of data, it has become essential to have effective tools and techniques to process and present data visually. Python, a versatile programming language, offers powerful libraries that make statistical analysis and data visualization accessible to both beginners and experienced professionals.

Introduction

In today’s data-driven world, businesses, researchers, and individuals need to harness the power of data to derive meaningful conclusions and drive growth. Statistics provides the foundation for understanding and summarizing data, while data visualization enables us to communicate complex information visually. Python, with its extensive set of libraries, has emerged as a popular language for performing statistical analysis and creating impactful data visualizations.

Importance of Statistics and Data Visualization

Enhancing data understanding and insights

Statistics allows us to explore data and uncover patterns, trends, and relationships. By applying statistical techniques, we can summarize large datasets, identify outliers, and gain a deeper understanding of the underlying distributions. This knowledge helps us make informed decisions and predictions based on evidence rather than intuition alone.

Data visualization complements statistics by representing data in a visual format. Visualizations help us perceive patterns and relationships that may not be apparent from raw data alone. Through graphs, charts, and interactive dashboards, we can communicate complex information more effectively, enabling stakeholders to grasp insights quickly and make data-driven decisions.

Supporting decision-making processes

Both statistics and data visualization are crucial for decision-making processes. Statistical analysis helps us evaluate hypotheses, test the significance of results, and quantify uncertainty. With statistical techniques, we can assess the effectiveness of interventions, identify factors influencing outcomes, and optimize strategies.

Data visualization provides an intuitive way to present data and results to decision-makers. It allows them to see trends, compare variables, and understand the impact of different scenarios. By conveying information visually, data visualization facilitates faster comprehension, leading to more informed and confident decision-making.

Python as a Powerful Tool for Statistics and Data Visualization

Python has gained popularity in the field of data science and analytics due to its simplicity, versatility, and extensive libraries. It offers a rich ecosystem of tools specifically designed for statistical analysis and data visualization. Python’s readability and user-friendly syntax make it accessible to users with varying levels of programming experience.

Key Python Libraries for Statistics and Data Visualization

To perform statistical analysis and create compelling data visualizations in Python, several key libraries are commonly used. These libraries provide a wide range of functionalities and make complex operations more accessible.

NumPy

NumPy is a fundamental library for numerical computing in Python. It provides powerful tools for working with arrays and matrices, enabling efficient and fast computation of mathematical operations. NumPy forms the foundation for many other libraries in the scientific Python ecosystem.
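As a minimal sketch of what array-based computation looks like, the snippet below uses made-up temperature readings and a small matrix. The variable names and values are illustrative assumptions, not part of any real dataset.

```python
import numpy as np

# Hypothetical sample data: daily temperatures in Celsius.
temps_c = np.array([18.5, 21.0, 19.5, 23.0, 20.0])

# Vectorized arithmetic applies to every element without an explicit loop.
temps_f = temps_c * 9 / 5 + 32

# Matrix-vector multiplication with the @ operator.
matrix = np.array([[1, 2], [3, 4]])
vector = np.array([10, 20])
product = matrix @ vector

print(temps_f)
print(product)
```

The same expressions work unchanged on arrays with millions of elements, which is where the speed advantage over plain Python loops becomes noticeable.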

Pandas

Pandas is a versatile library that simplifies data manipulation and analysis. Its core data structures, the Series and the DataFrame, make it easy to handle structured, tabular data. Pandas offers a wide range of functionalities for filtering, aggregating, and transforming data, making it an essential tool for data preprocessing.
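To make filtering, aggregating, and transforming concrete, here is a small sketch on an invented sales table. The column names and figures are hypothetical and chosen only for illustration.

```python
import pandas as pd

# Hypothetical sales records.
sales = pd.DataFrame({
    "region": ["North", "South", "North", "East", "South"],
    "units": [12, 7, 5, 9, 14],
    "price": [2.5, 3.0, 2.5, 4.0, 3.0],
})

# Transform: derive a new column from existing ones.
sales["revenue"] = sales["units"] * sales["price"]

# Filter: keep only rows that match a condition.
large_orders = sales[sales["units"] >= 9]

# Aggregate: total revenue per region.
revenue_by_region = sales.groupby("region")["revenue"].sum()

print(large_orders)
print(revenue_by_region)
```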

Matplotlib

Matplotlib is a popular data visualization library in Python. It offers a wide range of plotting functions and customization options, allowing users to create static, animated, and interactive visualizations. With Matplotlib, you can generate various types of charts, including line plots, bar plots, scatter plots, and histograms.

Seaborn

Seaborn is built on top of Matplotlib and provides a high-level interface for creating visually appealing statistical graphics. It simplifies the creation of complex visualizations, such as heatmaps, violin plots, and pair plots. Seaborn can also compute and draw statistical summaries, such as regression fits with confidence bands, directly within a plot.

Exploring Descriptive Statistics with Python

Descriptive statistics help us summarize and understand the characteristics of a dataset. With its statistical libraries, Python makes it straightforward to calculate descriptive statistics.

Mean, median, and mode

The mean represents the average value of a dataset, providing a measure of central tendency. The median represents the middle value, separating the higher and lower halves of the dataset. The mode identifies the most frequent value or values in the dataset.
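One simple way to compute these three measures is Python's built-in `statistics` module, shown here on a hypothetical list of test scores. Pandas and NumPy offer equivalent methods for larger datasets.

```python
import statistics

# Hypothetical test scores.
scores = [70, 85, 85, 90, 100]

mean_score = statistics.mean(scores)      # arithmetic average
median_score = statistics.median(scores)  # middle value of the sorted data
modes = statistics.multimode(scores)      # list of all most frequent values

print(mean_score, median_score, modes)
```

`multimode` returns a list because a dataset can have more than one mode; here only 85 appears twice.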

Measures of dispersion

Measures of dispersion, such as the standard deviation and variance, indicate the spread or variability of data. They provide insights into the distribution of values and the degree of deviation from the mean.
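The sketch below computes variance and standard deviation with the built-in `statistics` module on the same kind of hypothetical score data. It distinguishes the sample versions (dividing by n - 1) from the population versions (dividing by n), a detail that is easy to overlook.

```python
import statistics

# Hypothetical test scores.
scores = [70, 85, 85, 90, 100]

# Sample statistics divide by n - 1 (use when the data is a sample).
sample_variance = statistics.variance(scores)
sample_std = statistics.stdev(scores)

# Population statistics divide by n (use when the data is the whole population).
population_variance = statistics.pvariance(scores)

print(sample_variance, sample_std, population_variance)
```

If you switch to NumPy, note that `np.var` and `np.std` compute the population version by default; pass `ddof=1` to get the sample version.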

Frequency distributions

Frequency distributions organize data into intervals or bins and display the number of occurrences or frequencies within each interval. Python’s libraries offer functions to calculate and visualize frequency distributions effectively.
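As an illustration, NumPy's `histogram` function can count how many values fall into each bin. The ages and bin edges below are invented for the example.

```python
import numpy as np

# Hypothetical ages of survey respondents.
ages = np.array([22, 25, 31, 34, 38, 41, 45, 47, 52, 58])

# Bin edges define the intervals 20-30, 30-40, 40-50, and 50-60.
bin_edges = [20, 30, 40, 50, 60]
counts, edges = np.histogram(ages, bins=bin_edges)

# Print each interval with its frequency.
for left, right, count in zip(edges[:-1], edges[1:], counts):
    print(f"{left}-{right}: {count}")
```

Each bin includes its left edge and excludes its right edge, except the last bin, which includes both.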

Conducting Inferential Statistics with Python

Inferential statistics allow us to make inferences or draw conclusions about populations based on sample data. Python provides powerful tools for conducting inferential statistics.

Hypothesis testing

Hypothesis testing is a statistical method for making decisions or drawing conclusions about a population based on sample data. Python’s statistical libraries offer functions to perform hypothesis tests, such as t-tests and chi-square tests, allowing us to evaluate hypotheses and determine the statistical significance of results.
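A minimal sketch of a two-sample t-test with SciPy follows. The two groups are made-up measurements, and the 0.05 significance level is a conventional choice rather than a rule.

```python
from scipy import stats

# Hypothetical measurements from two independent groups.
group_a = [5.1, 4.9, 5.6, 5.8, 6.0, 5.3]
group_b = [6.2, 6.8, 6.5, 7.1, 6.4, 6.9]

# Welch's t-test: does not assume the groups share the same variance.
result = stats.ttest_ind(group_a, group_b, equal_var=False)
t_stat, p_value = result.statistic, result.pvalue

# Compare the p-value against a chosen significance level.
alpha = 0.05
reject_null = p_value < alpha

print(f"t = {t_stat:.2f}, p = {p_value:.4f}, reject null: {reject_null}")
```

Passing `equal_var=False` selects Welch's variant, which is a reasonable default when you have no evidence that the two groups have equal variances.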

Confidence intervals

A confidence interval gives a range of plausible values for a population parameter. It is constructed so that, over repeated sampling, a stated proportion of such intervals (for example, 95%) would contain the true value. Python’s libraries offer functions to calculate confidence intervals, helping us judge the precision of our sample estimates.
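One common approach, sketched here with SciPy, is a t-based interval for the mean. The sample values are hypothetical, and the method assumes the data is roughly normally distributed.

```python
import numpy as np
from scipy import stats

# Hypothetical sample of measurements.
sample = np.array([4.8, 5.1, 5.0, 4.7, 5.3, 5.2, 4.9, 5.0])

sample_mean = sample.mean()
standard_error = stats.sem(sample)  # sample standard deviation / sqrt(n)

# 95% confidence interval for the mean using the t distribution.
ci_low, ci_high = stats.t.interval(
    0.95, df=len(sample) - 1, loc=sample_mean, scale=standard_error
)

print(f"mean = {sample_mean:.3f}, 95% CI = ({ci_low:.3f}, {ci_high:.3f})")
```

A wider interval signals a less precise estimate; collecting more data typically narrows it.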

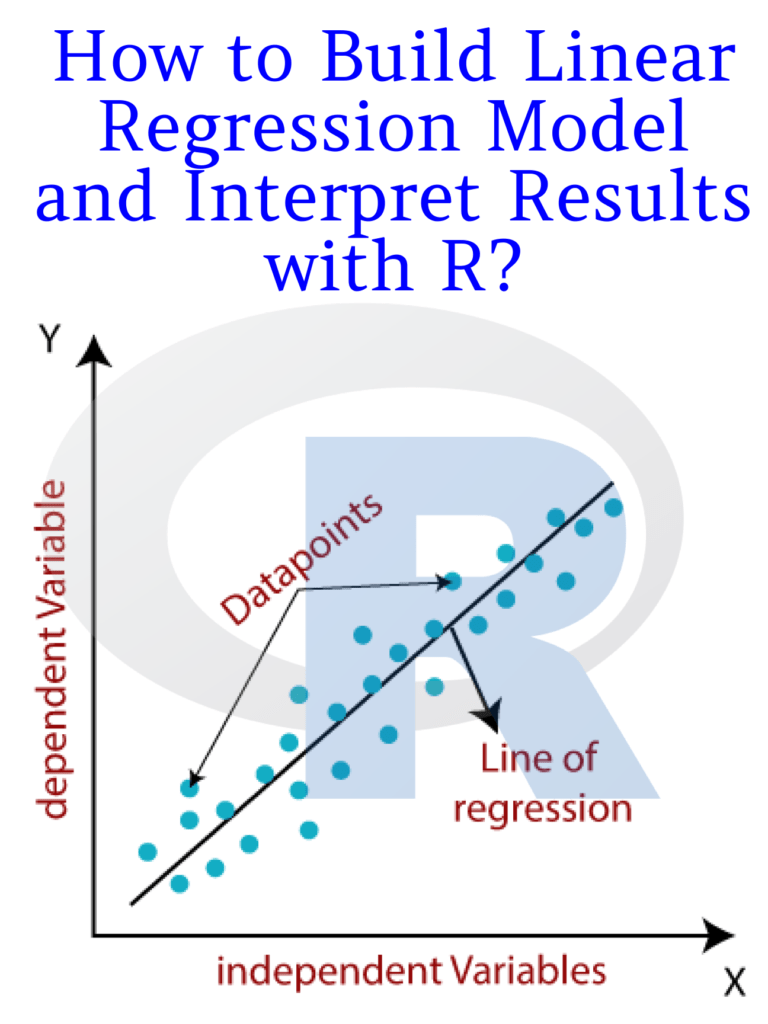

Regression analysis

Regression analysis allows us to model and analyze the relationship between variables. Python’s libraries provide a variety of regression models, such as linear regression, logistic regression, and polynomial regression. These models help us understand how variables interact and make predictions based on observed data.
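As a small example of simple linear regression, the sketch below fits a line with SciPy's `linregress`. The study-hours and exam-score data are invented for illustration.

```python
from scipy import stats

# Hypothetical data: hours studied and exam scores.
hours = [1, 2, 3, 4, 5]
scores = [52, 55, 61, 64, 68]

# Fit a straight line: score = intercept + slope * hours.
fit = stats.linregress(hours, scores)

# Use the fitted line to predict the score for 6 hours of study.
predicted = fit.intercept + fit.slope * 6

print(f"slope = {fit.slope:.2f}, intercept = {fit.intercept:.2f}")
print(f"r-squared = {fit.rvalue ** 2:.3f}, prediction = {predicted:.1f}")
```

For models with several predictors, or for logistic regression, libraries such as statsmodels and scikit-learn are the usual next step.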

Creating Effective Data Visualizations with Python

Python’s libraries offer powerful tools for creating impactful data visualizations that enhance data communication and storytelling.

Basic plots with Matplotlib

Matplotlib provides a wide range of plotting functions to create basic visualizations. With Matplotlib, you can generate line plots, bar plots, scatter plots, pie charts, and more. You can also customize colors, labels, and other visual elements to produce polished figures.
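Here is a minimal sketch of a line plot and a bar plot side by side, with custom colors and labels. The monthly revenue figures are hypothetical, and the `Agg` backend is selected only so that the script runs without a display.

```python
import matplotlib
matplotlib.use("Agg")  # non-interactive backend; remove to open a plot window
import matplotlib.pyplot as plt

# Hypothetical monthly revenue.
months = ["Jan", "Feb", "Mar", "Apr"]
revenue = [120, 135, 128, 150]

# One figure with two side-by-side axes.
fig, (ax_line, ax_bar) = plt.subplots(1, 2, figsize=(8, 3))

ax_line.plot(months, revenue, color="tab:blue", marker="o")
ax_line.set_title("Revenue trend")
ax_line.set_xlabel("Month")
ax_line.set_ylabel("Revenue")

ax_bar.bar(months, revenue, color="tab:orange")
ax_bar.set_title("Revenue by month")
ax_bar.set_xlabel("Month")

fig.tight_layout()
```

Call `fig.savefig("revenue.png")` to write the figure to a file, or `plt.show()` with an interactive backend to view it on screen.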

Advanced visualizations with Seaborn

Seaborn extends Matplotlib’s capabilities by offering higher-level functions for complex visualizations. It simplifies the creation of statistical graphics, such as box plots, violin plots, and swarm plots. Seaborn’s default styles and color palettes make it easy to create professional-looking visualizations.
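The sketch below draws a box plot from a small, made-up DataFrame. The column names `group` and `value` are assumptions for the example, and the `Agg` backend is used only so the script runs without a display.

```python
import matplotlib
matplotlib.use("Agg")  # non-interactive backend; remove to open a plot window
import pandas as pd
import seaborn as sns

# Hypothetical measurements for two groups.
df = pd.DataFrame({
    "group": ["A"] * 5 + ["B"] * 5,
    "value": [4.1, 4.8, 5.0, 5.3, 6.2, 5.9, 6.4, 6.8, 7.1, 7.7],
})

# Apply Seaborn's default theme, then draw one box per group.
sns.set_theme()
ax = sns.boxplot(data=df, x="group", y="value")
ax.set_title("Value by group")
```

Swapping `sns.boxplot` for `sns.violinplot` or `sns.swarmplot` with the same arguments produces the other plot types mentioned above.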

Interactive Data Visualizations with Python

In addition to static visualizations, Python provides libraries for creating interactive data visualizations that engage users and allow for exploration and analysis.

Plotly

Plotly is a powerful library for creating interactive visualizations, including charts, graphs, maps, and dashboards. It offers a wide range of customization options and interactive features, such as hover effects, zooming, and panning. With Plotly, you can create interactive plots that respond to user interactions, providing a dynamic and engaging data exploration experience.

Bokeh

Bokeh is another popular library for interactive data visualizations in Python. It focuses on creating interactive visualizations for the web, allowing users to interact with data using tools like hover tooltips, zooming, and selection. Bokeh supports a variety of plot types and offers seamless integration with web technologies, making it suitable for creating interactive dashboards and web applications.
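A minimal sketch of a Bokeh scatter plot with hover tooltips, zooming, and selection might look like the following. The data points are invented, and the tool list is one reasonable choice among many.

```python
from bokeh.models import HoverTool
from bokeh.plotting import figure

# Create a figure with pan, zoom, selection, and reset tools enabled.
p = figure(
    title="Hypothetical measurements",
    x_axis_label="x",
    y_axis_label="y",
    tools="pan,wheel_zoom,box_select,reset",
)

# Scatter markers; Bokeh stores the lists in columns named "x" and "y".
p.scatter([1, 2, 3, 4, 5], [6, 7, 2, 4, 5], size=10)

# Show the x and y values of a point when the cursor hovers over it.
p.add_tools(HoverTool(tooltips=[("x", "@x"), ("y", "@y")]))

# from bokeh.plotting import show; show(p) would open the plot in a browser.
```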

Real-World Applications of Statistics and Data Visualization with Python

The applications of statistics and data visualization with Python are vast and span across various industries and domains.

Business analytics

In business analytics, statistical analysis and data visualization are instrumental in understanding customer behavior, tracking market trends, and optimizing business processes. Python’s libraries provide the necessary tools to analyze sales data, identify customer segments, and create visualizations that support strategic decision-making. By leveraging statistical techniques and visualizations, businesses can gain insights that drive growth and competitiveness.

Healthcare analytics

In healthcare, statistics and data visualization play a crucial role in analyzing patient data, identifying patterns, and improving healthcare outcomes. Python’s libraries enable healthcare professionals to perform statistical analysis on patient data, conduct epidemiological studies, and create visualizations that aid in disease surveillance and treatment evaluation. The combination of statistical analysis and data visualization enhances medical research, policy-making, and patient care.

Social sciences

In the social sciences, statistics and data visualization are used to analyze survey data, conduct experiments, and explore social phenomena. Python’s libraries provide researchers with the tools to analyze large datasets, test hypotheses, and visualize data in meaningful ways. By using statistical techniques and visualizations, social scientists can uncover insights into human behavior, societal trends, and policy impacts.

FAQs

1. Is Python suitable for beginners in statistics and data visualization?

Absolutely! Python has a user-friendly syntax and a vast community that provides resources and support for beginners. With its intuitive libraries, such as NumPy, Pandas, Matplotlib, and Seaborn, Python makes it easier to learn and apply statistical concepts and create compelling visualizations.

2. Can I create interactive data visualizations with Python?

Yes, Python offers libraries like Plotly and Bokeh that specialize in creating interactive data visualizations. These libraries provide features like hover effects, zooming, and panning, allowing users to explore data and gain deeper insights interactively.

3. How can statistics and data visualization benefit businesses?

Statistics and data visualization help businesses make informed decisions based on data insights. By analyzing sales data, customer behavior, and market trends, businesses can optimize their strategies, improve customer satisfaction, and identify new growth opportunities.

4. What are some real-world applications of statistics and data visualization in healthcare?

In healthcare, statistics and data visualization are used for analyzing patient data, monitoring disease outbreaks, evaluating treatment effectiveness, and improving healthcare delivery. These techniques aid in identifying patterns, optimizing healthcare processes, and enhancing patient outcomes.

5. Where can I learn more about statistics and data visualization with Python?

There are various online resources, tutorials, and courses available that can help you learn statistics and data visualization with Python. Websites like DataCamp, Coursera, and YouTube offer comprehensive courses and tutorials that cater to different skill levels. Additionally, Python’s official documentation and online communities like Stack Overflow can provide valuable guidance and support.
