Data visualization is an essential part of data analysis. It helps us to understand data by providing visual representations of complex information. ggplot2 is a popular data visualization package in R, which is widely used by data scientists, statisticians, and researchers to create elegant and customizable graphs. In this article, we will discuss ggplot2 and its capabilities in data visualization.

What is ggplot2?

ggplot2 is an R package based on the principles of The Grammar of Graphics, a book by Leland Wilkinson. It builds a graph by breaking it down into separate components, such as data, aesthetic mappings, and geometric objects, that are combined according to a consistent set of rules. With it you can produce a wide range of statistical graphics, including scatterplots, line charts, bar charts, histograms, and many more.

Advantages of ggplot2:

The following are some of the advantages of using ggplot2 for data visualization:

- Customization: ggplot2 provides a high level of customization, which allows users to modify the appearance of their graphs to meet their specific needs.

- Flexibility: ggplot2 is flexible and can be used to create a wide range of visualizations, including scatterplots, histograms, boxplots, and many more.

- Ease of use: ggplot2 has a consistent syntax. Every plot starts with a call to ggplot() and adds layers with the + operator, which allows users to create graphs quickly.

- Reproducibility: Because a ggplot2 graph is defined entirely in code, it can be regenerated exactly from the same data and script, making it easier to share and replicate results.

Basic components of ggplot2:

ggplot2 graphs are built up from a set of basic components, including data, aesthetic mappings, geometric objects, scales, and facets.

- Data: ggplot2 requires data to be in the form of a data frame or a tibble. The data frame contains the variables to be plotted on the x and y-axes.

- Aesthetic mappings: Aesthetic mappings define how variables are mapped to visual properties of a graph, such as color, shape, and size.

- Geometric objects: Geometric objects are used to represent data points on the plot. Examples of geometric objects include points, lines, bars, and histograms.

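- Scales: Scales control how data values are translated into visual properties such as position, color, or size. They also produce the axes and legends that let a reader map those properties back to the data.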

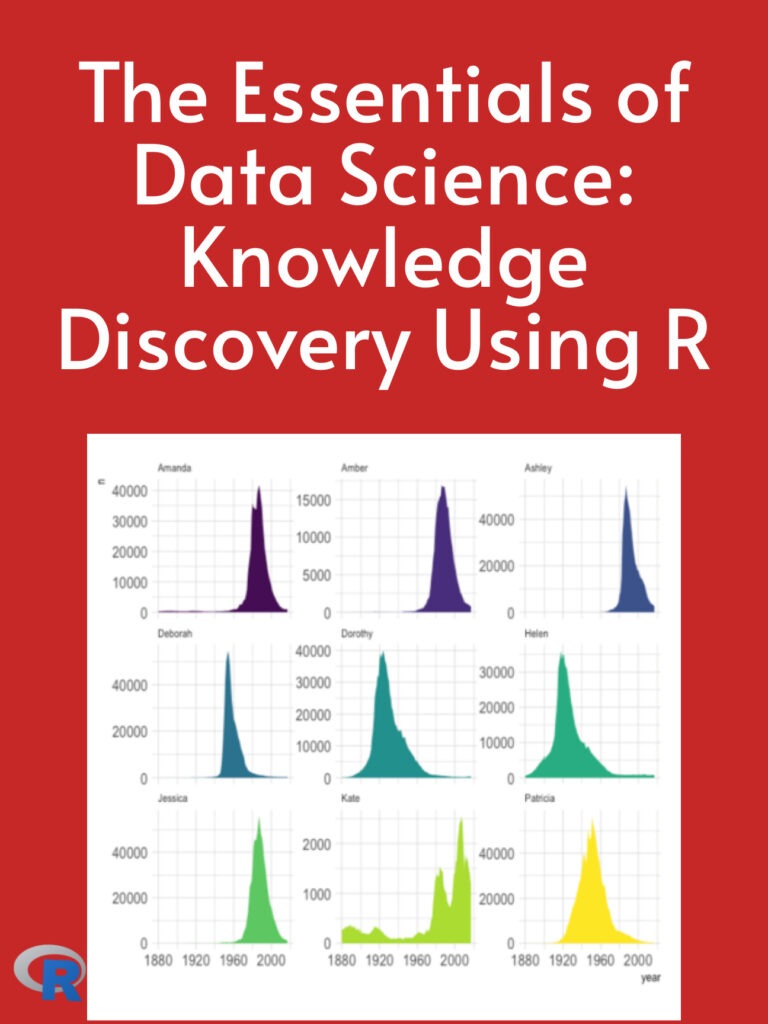

- Facets: Facets are used to split a plot into multiple panels based on a categorical variable.
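To see how these components fit together, here is a minimal sketch. It assumes the mpg dataset that ships with ggplot2; the dataset, the chosen columns (displ, hwy, drv, class), and the "Set1" palette are picked for illustration only and are not part of the discussion above.

```r
library(ggplot2)

# Data: mpg is a data frame bundled with ggplot2 (an illustrative choice)
p <- ggplot(data = mpg, aes(x = displ, y = hwy, colour = drv)) +  # aesthetic mappings
  geom_point() +                            # geometric object: one point per row
  scale_colour_brewer(palette = "Set1") +   # scale: how drv values become colours
  facet_wrap(~ class)                       # facets: one panel per vehicle class

print(p)
```

Each line after the first adds one component, so removing the scale or facet line still gives a valid plot that falls back to ggplot2's defaults.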

Examples of ggplot2 graphs:

- Scatterplot:

A scatterplot is a graph that displays the relationship between two continuous variables. In ggplot2, a scatterplot can be created using the geom_point() function.

    library(ggplot2)
    ggplot(data = iris, aes(x = Sepal.Length, y = Sepal.Width)) +
      geom_point()

This code creates a scatterplot of sepal width (y-axis) against sepal length (x-axis) in the iris dataset.
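As a possible extension, a third variable can be mapped to an aesthetic. The sketch below assumes we want to tell the three iris species apart by colour; the name p_scatter is only an illustrative choice.

```r
library(ggplot2)

# Map Species to colour so the three iris species can be told apart
p_scatter <- ggplot(data = iris,
                    aes(x = Sepal.Length, y = Sepal.Width, colour = Species)) +
  geom_point()

print(p_scatter)
```

ggplot2 adds the legend for Species automatically, because the colour comes from an aesthetic mapping rather than a fixed value.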

- Bar chart:

A bar chart is a graph that displays the frequency or proportion of a categorical variable. In ggplot2, a bar chart can be created using the geom_bar() function, which by default counts the number of rows in each category.

    ggplot(data = diamonds, aes(x = cut)) +
      geom_bar()

This code creates a bar chart of the number of diamonds of each cut in the diamonds dataset.
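The example above shows frequencies. To show proportions instead, one common approach is sketched below. It assumes a ggplot2 version that provides after_stat() (3.3.0 or later); the name p_prop and the axis label are illustrative.

```r
library(ggplot2)

# group = 1 treats all bars as one group, so each proportion is
# computed relative to the whole dataset rather than within each cut
p_prop <- ggplot(data = diamonds, aes(x = cut)) +
  geom_bar(aes(y = after_stat(prop), group = 1)) +
  labs(y = "Proportion")

print(p_prop)
```

Without group = 1, each cut would form its own group and every bar would have a proportion of 1.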

- Line chart:

A line chart is a graph that displays the change in a continuous variable over time or another continuous variable. In ggplot2, a line chart can be created using the geom_line() function.

    ggplot(data = economics, aes(x = date, y = unemploy)) +
      geom_line()

This code creates a line chart of the number of unemployed people (the unemploy variable, recorded in thousands) over time in the economics dataset.
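To illustrate the kind of customization mentioned earlier, the sketch below adds a title, axis labels, a line colour, and a built-in theme to the same line chart. The title text, the "steelblue" colour, and the name p_line are illustrative choices, not something ggplot2 prescribes.

```r
library(ggplot2)

p_line <- ggplot(data = economics, aes(x = date, y = unemploy)) +
  geom_line(colour = "steelblue") +        # fixed colour, set outside aes()
  labs(title = "Unemployment over time",   # illustrative title and axis labels
       x = "Date",
       y = "Unemployed (thousands)") +
  theme_minimal()                          # one of ggplot2's built-in themes

print(p_line)
```

Setting colour outside aes() applies one fixed value to the whole line, whereas placing it inside aes() would map colour to a variable in the data.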