Econometrics is a branch of economics that uses statistical and mathematical methods to analyze economic data. It helps economists and policymakers evaluate economic policies and forecast economic outcomes. R is a programming language widely used in econometrics to analyze, visualize, and interpret data. This article introduces the basic concepts of econometrics and shows how R can be used to apply them.


What is Econometrics?

Econometrics is the application of statistical methods to economic data to test economic theories and forecast economic outcomes. It is used to estimate the relationships between economic variables, such as price and quantity, income and expenditure, and interest rates and investment. Econometrics uses statistical models to describe the relationships between these variables and to make predictions about future economic behavior.

Econometrics involves three steps:

- Specification: This involves stating the economic theory as a model, that is, choosing the dependent variable and the explanatory variables that will be used to test it.

- Estimation: This involves estimating the parameters of the model using statistical methods.

- Evaluation: This involves testing the validity of the model and the accuracy of the predictions.
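The three steps above can be sketched in base R. This is only an illustration under stated assumptions: the data are simulated, the variable names (`income`, `expenditure`) and the "true" coefficients (5 and 0.8) are made up for the example, and a simple linear model is assumed rather than derived from any particular theory.

```r
# Simulated data (an assumption for illustration, not real observations):
# expenditure rises with income, plus random noise.
set.seed(42)
n <- 200
income <- runif(n, min = 20, max = 100)
expenditure <- 5 + 0.8 * income + rnorm(n, sd = 4)
consumption_data <- data.frame(income, expenditure)

# 1. Specification: expenditure is modeled as a linear function of income.
model_formula <- expenditure ~ income

# 2. Estimation: ordinary least squares via lm().
fit <- lm(model_formula, data = consumption_data)

# 3. Evaluation: coefficient table, goodness of fit, and predictions.
fit_summary <- summary(fit)
print(coef(fit_summary))            # estimates, standard errors, t and p values
r_squared <- fit_summary$r.squared  # share of variance explained

# Predict expenditure for three hypothetical income levels.
new_households <- data.frame(income = c(30, 60, 90))
predicted <- predict(fit, newdata = new_households)
print(predicted)
```

Because the data are simulated, the estimated slope can be compared with the known value of 0.8, which is a quick sanity check that is not available with real data.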

R and Econometrics

R is a popular programming language in econometrics because of its versatility and its ability to work with large and complex datasets. Between base R and its contributed packages, it supports a wide range of econometric methods, including linear regression, time-series analysis, panel data analysis, and non-parametric analysis.

R also provides a wide range of visualization tools, including graphs, charts, and tables, to help economists and policymakers understand economic data and make informed decisions.

Using R for Econometric Analysis

To use R for econometric analysis, you will need to install the relevant packages for your analysis. There are several packages available for econometric analysis, including:

- plm: This package is used for panel data analysis.

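- lmtest: This package is used for diagnostic and hypothesis tests on linear regression models, such as the Breusch-Pagan test for heteroskedasticity (`bptest`) and the Durbin-Watson test for autocorrelation (`dwtest`).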

- tsDyn: This package is used for time-series analysis, with a focus on nonlinear models such as threshold autoregressions.

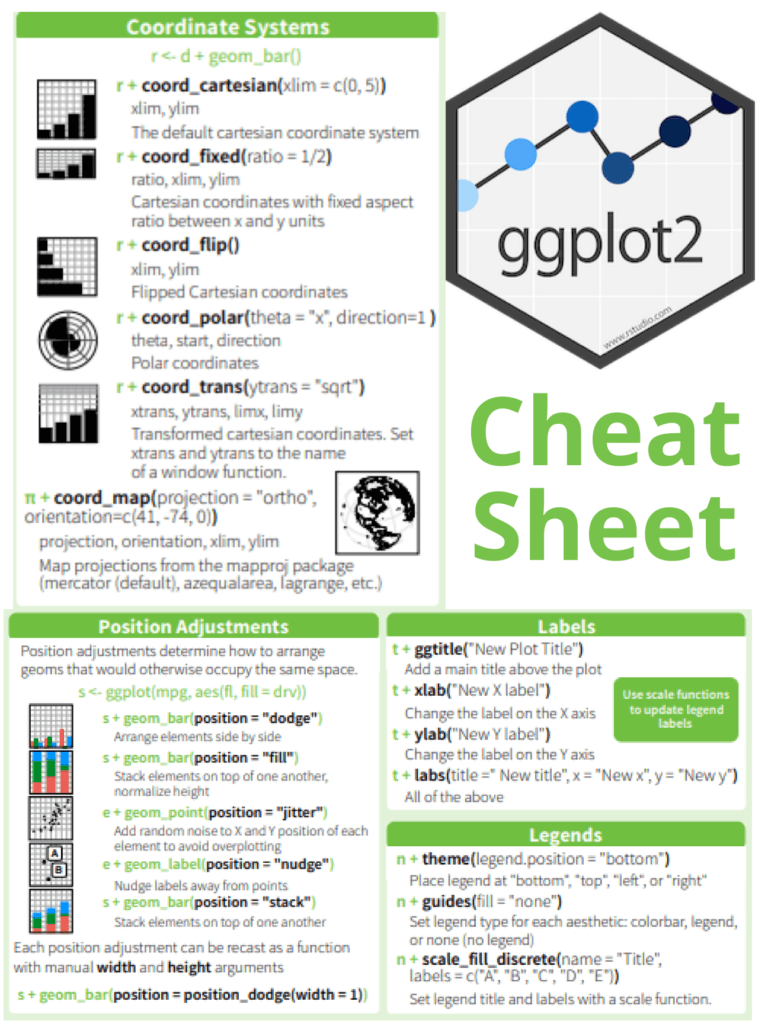

- ggplot2: This package is used for data visualization.
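As a minimal sketch, the snippet below checks which of the packages listed above are already installed. The variable names `pkgs` and `not_installed` are chosen for this example, and the install call is left commented out so that running the snippet does not change your system.

```r
# Packages named in the list above.
pkgs <- c("plm", "lmtest", "tsDyn", "ggplot2")

# requireNamespace() returns TRUE if a package can be loaded, FALSE otherwise.
is_installed <- vapply(pkgs, requireNamespace, logical(1), quietly = TRUE)
not_installed <- pkgs[!is_installed]

if (length(not_installed) > 0) {
  message("Not installed: ", paste(not_installed, collapse = ", "))
  # Uncomment to install the missing packages from CRAN:
  # install.packages(not_installed)
} else {
  message("All listed packages are installed.")
}
```

Once a package is installed, `library(plm)` (or the equivalent for another package) attaches it for the current session.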

Once you have installed the relevant packages, you can start using R for econometric analysis. Here are some basic steps:

- Load the data: You can load data into R from various sources, including CSV files, Excel files, and SQL databases.

- Clean and preprocess the data: This involves handling missing values and outliers, and transforming the data if necessary.

- Model specification: This involves defining the economic theory and the variables that will be used to test it.

- Estimation: This involves estimating the parameters of the model using statistical methods.

- Evaluation: This involves testing the validity of the model and the accuracy of the predictions.

- Visualization: This involves creating graphs, charts, and tables to help understand and communicate the results of the analysis.
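The steps above can be sketched end to end in base R. The example is illustrative only: it uses the `longley` macroeconomic dataset that ships with R, writes it to a temporary CSV file so there is something to load, and injects one missing value so the cleaning step has work to do. The choice of model (`Employed ~ GNP`) is an assumption made for the example, and base graphics are used instead of ggplot2 so the code runs without extra packages.

```r
# Load: write the built-in longley data to a temporary CSV, then read it back.
csv_path <- tempfile(fileext = ".csv")
raw <- longley
raw$GNP[3] <- NA  # artificial missing value, added only for this illustration
write.csv(raw, csv_path, row.names = FALSE)
econ <- read.csv(csv_path)

# Clean and preprocess: drop rows that contain missing values.
econ_clean <- na.omit(econ)

# Model specification: employment as a linear function of GNP.
model_formula <- Employed ~ GNP

# Estimation: ordinary least squares.
fit <- lm(model_formula, data = econ_clean)

# Evaluation: coefficient table and in-sample root mean squared error.
print(summary(fit))
rmse <- sqrt(mean(residuals(fit)^2))

# Visualization: scatter plot with the fitted line, saved to a temporary PDF.
plot_path <- tempfile(fileext = ".pdf")
pdf(plot_path)
plot(econ_clean$GNP, econ_clean$Employed,
     xlab = "GNP", ylab = "Employed",
     main = "Employment and GNP (longley data)")
abline(fit)
dev.off()
```

With real data, the cleaning step usually needs more care than `na.omit()`: dropping rows can bias the estimates if values are not missing at random.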