R is an open-source programming language widely used for statistical computing, data analysis, and data visualization. It offers a broad range of tools and packages for working with data and is increasingly popular among data scientists and statisticians. If you are an Excel user who wants to learn R, you may be wondering where to start. This article introduces R for Excel users: the benefits of using R, the main differences between R and Excel, and tips for making the transition.

Benefits of using R

R offers several advantages over Excel, including:

- Large data handling: An Excel worksheet is limited to 1,048,576 rows and 16,384 columns. R has no fixed row limit; by default it holds data in memory, so the practical ceiling is the RAM on your machine. R can also work with more complex data structures than a grid of cells.

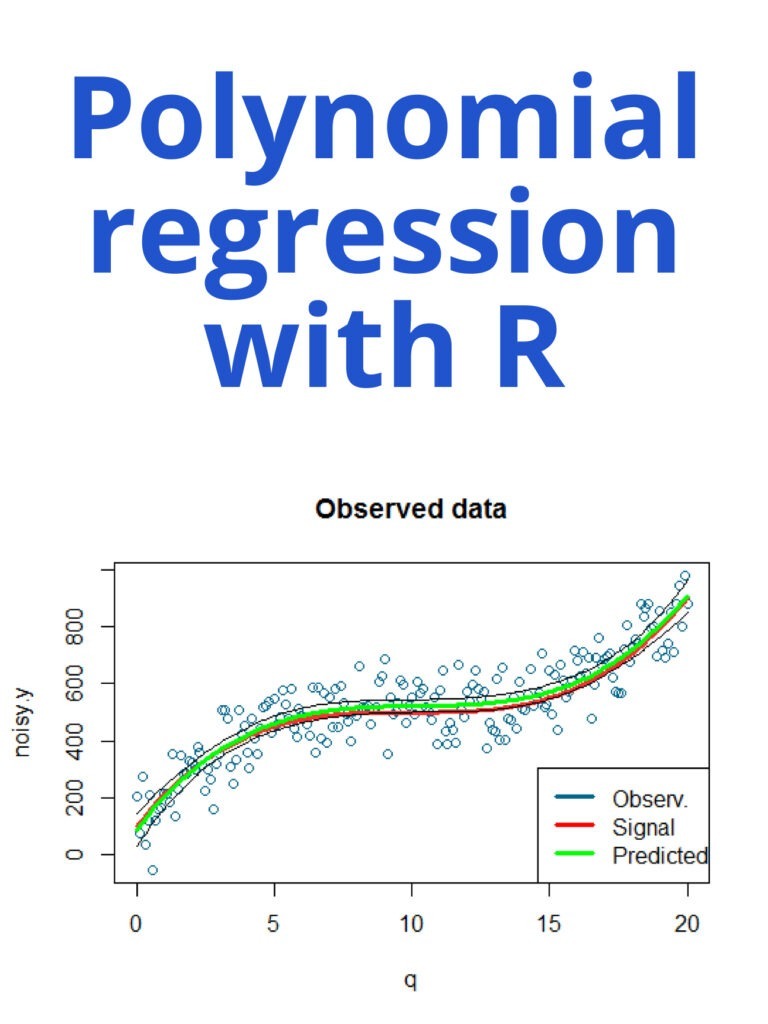

- Powerful statistics: R has a wide range of statistical and graphical techniques built in or available through packages, including linear and nonlinear modelling, time-series analysis, classification, and clustering.

- Open-source community: R is open source and is developed and extended by an active community. That community provides a wealth of resources and support, including thousands of add-on packages, documentation, and forums.

- Reproducible research: Because an R analysis is written as a script, every step is recorded in code. Anyone with the script and the data can rerun it and get the same results, which keeps your work transparent and easy to replicate.
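To make the statistics and reproducibility points concrete, here is a minimal sketch of a scripted analysis. It uses `mtcars`, a small dataset that ships with R; the variable names (`cars`, `mean_mpg`, `fit`, `slope`) are illustrative choices, not part of any standard workflow.

```r
# A small, fully scripted analysis using mtcars, a dataset bundled with R.
# Rerunning the script repeats every step exactly.

cars <- mtcars                      # copy of the built-in data frame

# Step 1: summarise a column, similar to AVERAGE() in Excel
mean_mpg <- mean(cars$mpg)

# Step 2: fit a linear model of fuel economy (mpg) against weight (wt)
fit <- lm(mpg ~ wt, data = cars)

# Step 3: pull out the estimated slope for weight
slope <- unname(coef(fit)["wt"])

print(mean_mpg)
print(slope)
```

Because each step lives in the script, a colleague can rerun the file and see exactly how every number was produced, which is harder to guarantee when steps are carried out by hand in a spreadsheet.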

Differences between R and Excel

While Excel is a powerful tool for working with data, it has limitations. It is best suited to small and medium-sized datasets and is not well suited to complex data structures. Here are some key differences between R and Excel:

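- Data structures: In Excel, data usually lives in worksheet tables made of rows and columns. R offers several data structures, including vectors, matrices, arrays, and data frames. A vector holds values of a single type, matrices and arrays extend that to two or more dimensions, and a data frame is a table whose columns can each have a different type, which makes it the closest equivalent to an Excel table.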

- Functions: In Excel, functions are pre-built formulas that you enter into cells to perform specific tasks. R also ships with a wide range of built-in functions for statistical analysis and data manipulation, and you can add more by installing packages or by writing your own functions.

- Programming: R is a programming language, while Excel is a spreadsheet program. In R you write code to perform each task, whereas in Excel you typically work interactively by typing into cells, clicking through menus, and entering formulas.
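The differences above can be sketched in a few lines of R. The data, the `add_tax` function, and the 20% rate are all invented for illustration.

```r
# Vector: an ordered set of values of one type, like a single Excel column
sales <- c(120, 95, 143)

# Matrix: a two-dimensional grid in which every cell has the same type
grid <- matrix(1:6, nrow = 2, ncol = 3)

# Data frame: the closest match to an Excel table; each column is a vector
orders <- data.frame(
  region = c("North", "South", "North"),
  sales  = sales
)

# A user-defined function, playing the role of a reusable formula
# (add_tax and the 20% rate are made up for this example)
add_tax <- function(amount, rate = 0.2) {
  amount * (1 + rate)
}

# Functions apply to a whole column at once, with no fill-down needed
orders$sales_with_tax <- add_tax(orders$sales)

# Comparable to =SUM(B2:B4) in a worksheet
total_sales <- sum(orders$sales)

print(orders)
print(total_sales)
```

Note that the calculation is applied to the entire column in one statement, where Excel would need a formula copied down each row.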

Tips for transitioning to R

If you are an Excel user who is interested in learning R, here are some tips to help you get started:

- Learn the basics: Begin with the fundamentals of R, including data structures, functions, and basic programming concepts. Many online tutorials and videos cover them.

- Start with small datasets: Work with small datasets first to get comfortable with R, then move on to larger and more complex ones as you gain experience.

- Use RStudio: RStudio is a popular integrated development environment (IDE) for R. It provides an easy-to-use interface for writing and running code, as well as tools for data visualization and exploration.

- Use packages: R has a vast range of packages that extend its functionality. Start with the most commonly used ones for data manipulation and visualization, such as dplyr and ggplot2.

- Practice: Practice is key to becoming proficient in R. Try working on small projects, participating in online communities, and contributing to open-source projects to improve your skills.
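As a first practice exercise, you might reproduce something you already do in Excel. The sketch below totals sales per region, roughly what a pivot table or SUMIF would give, first in base R and then with dplyr. The `orders` data is hypothetical, and the dplyr part runs only if the package is installed.

```r
# Hypothetical sales data, invented for this example
orders <- data.frame(
  region = c("North", "South", "North", "South"),
  sales  = c(120, 95, 143, 80)
)

# Base R: total sales per region
totals_base <- aggregate(sales ~ region, data = orders, FUN = sum)
print(totals_base)

# The same summary with dplyr, run only if the package is available
# (install it once with install.packages("dplyr"))
if (requireNamespace("dplyr", quietly = TRUE)) {
  totals_dplyr <- dplyr::summarise(
    dplyr::group_by(orders, region),
    sales = sum(sales)
  )
  print(totals_dplyr)
}
```

Both versions produce one row per region. Which style you prefer is largely a matter of taste; many newcomers find the dplyr verbs easier to read once the package is installed.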